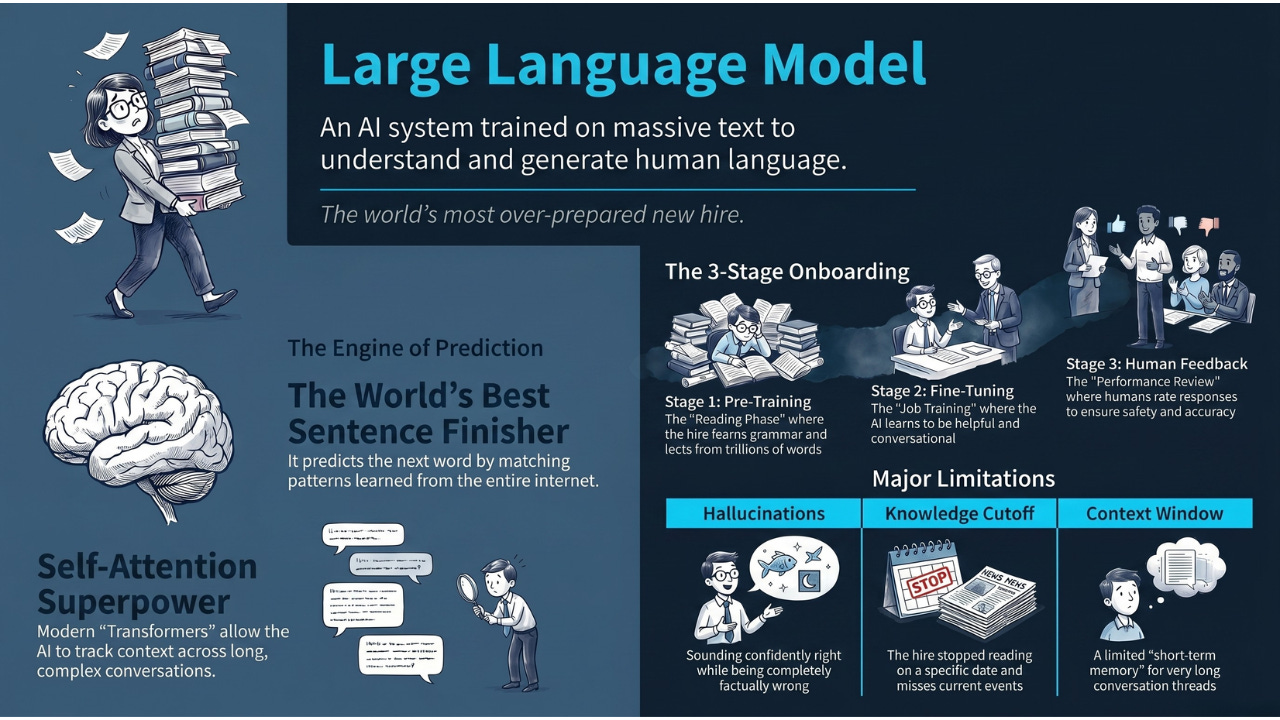

A Large Language Model (LLM) is an AI system trained on massive amounts of text to understand and generate human language. Think of it as the world’s most over-prepared new hire, one who read every document on the internet before their first day.

Hey Common Folks!

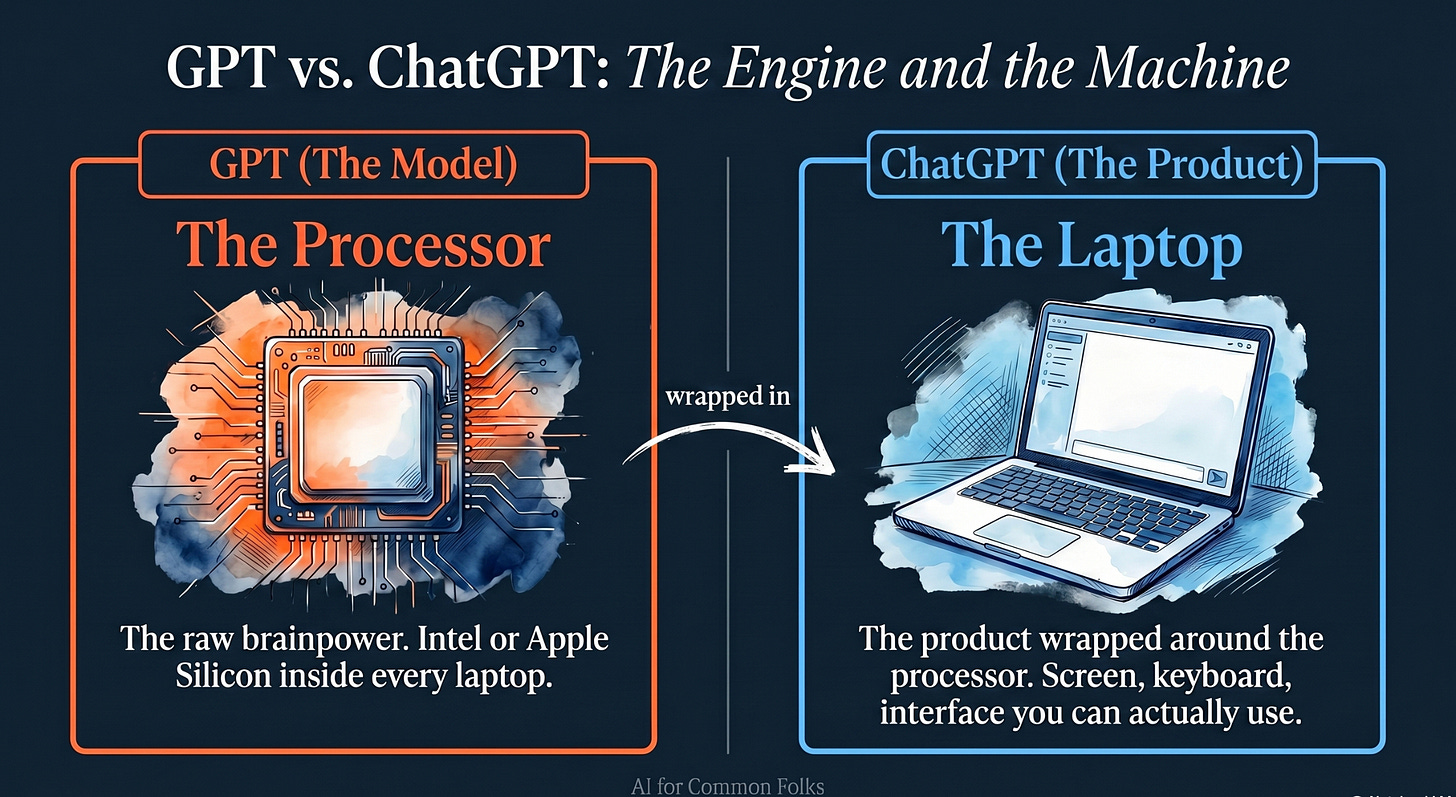

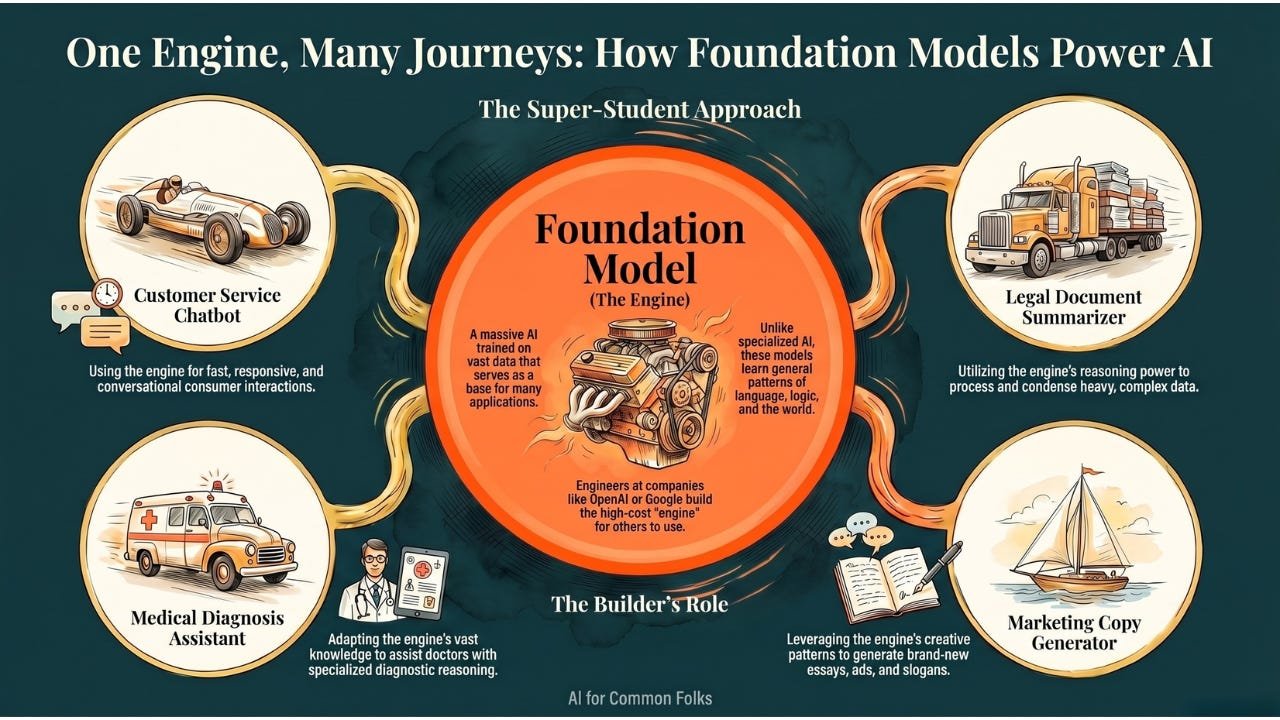

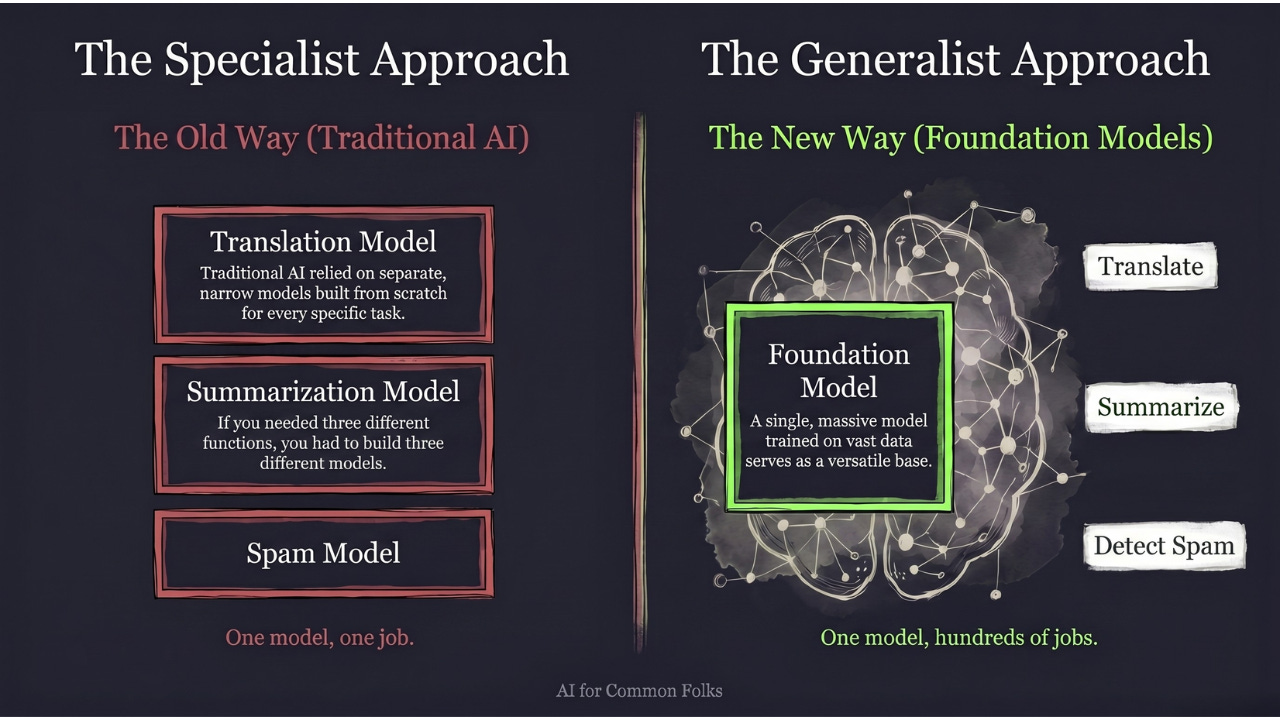

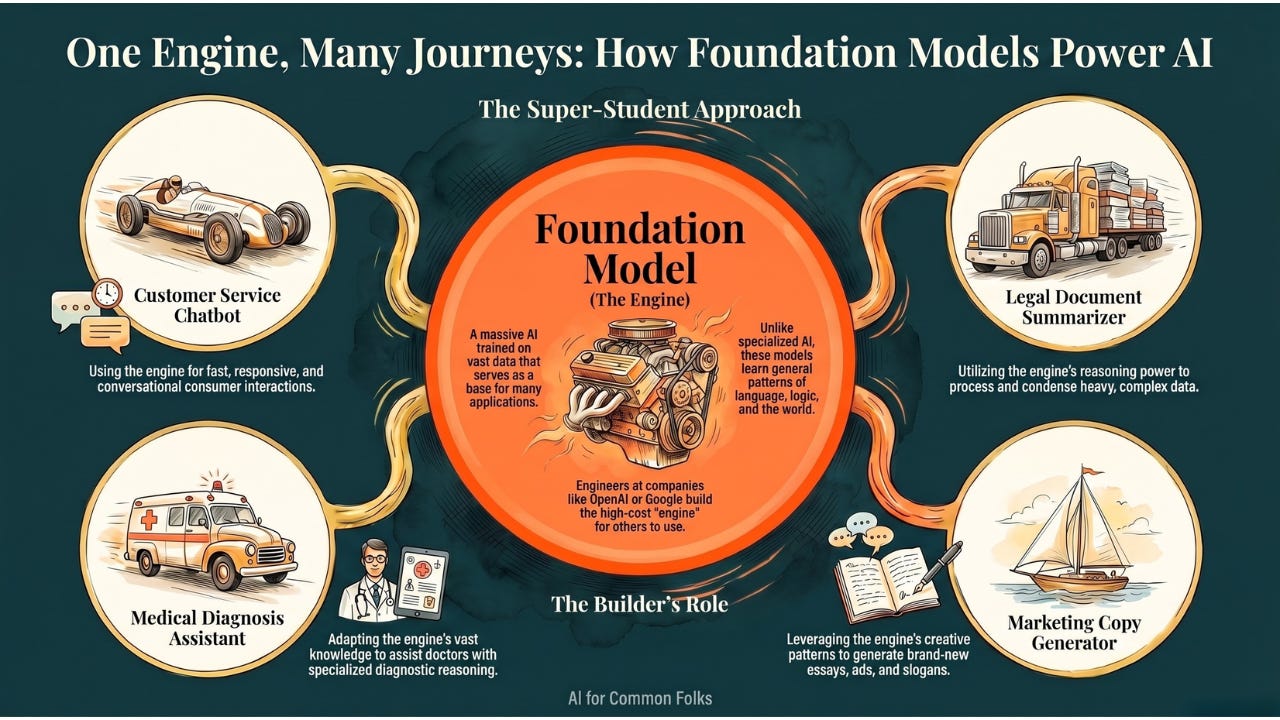

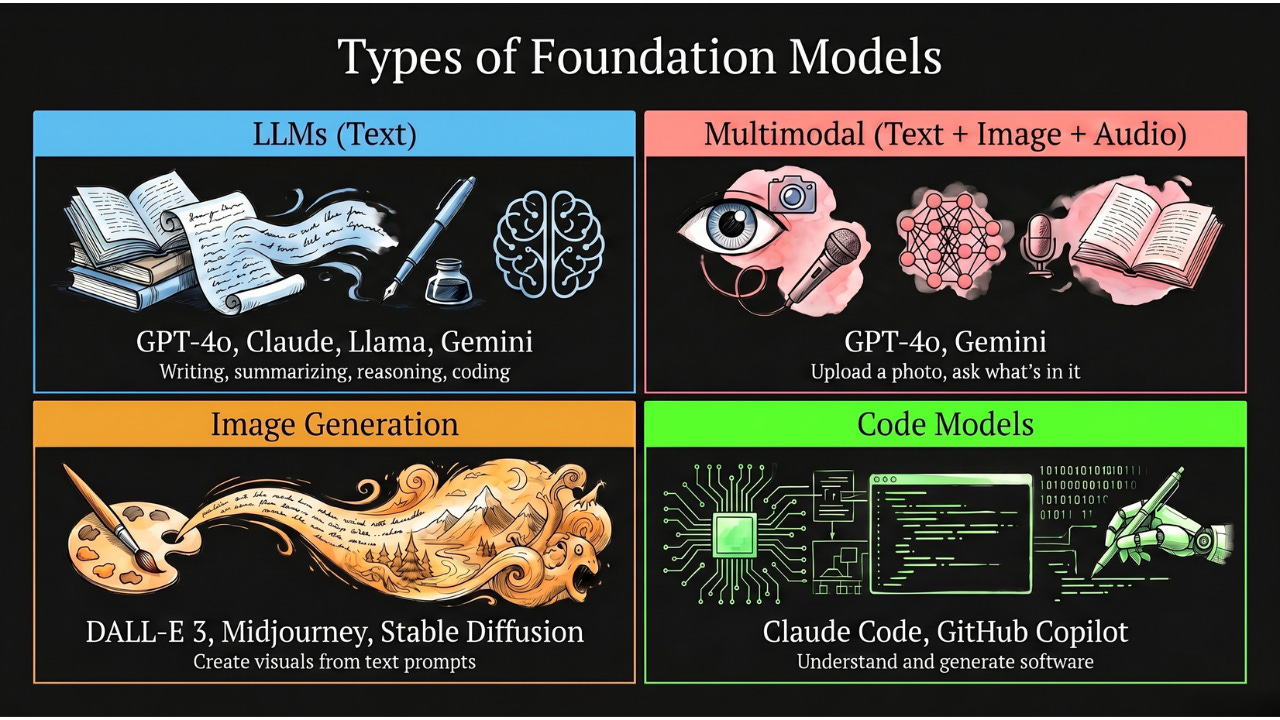

In our last two articles, we covered Foundation Models, the massive general-purpose AI brains, and GPT, the most famous family in that category. We talked about the Swiss Army Knives of AI and the three-letter recipe (Generative, Pre-trained, Transformer) that cracked modern language AI.

But GPT is just one example of a broader category. Claude, Gemini, Llama, DeepSeek — these are all in the same family. That family is called Large Language Models, or LLMs.

And LLMs are the technology powering nearly every modern AI chatbot you’ve used.

The best way to understand one is to think of it as a new employee at your company. A very unusual one.

Meet the New Hire

Imagine your company just hired someone. Before their first day, they did something no human could do: they read every email, every Slack message, every report, every meeting note, every document your company has ever produced. Not just your company, actually. Every company. Every book. Every website. Every Wikipedia article. Every Reddit thread. Every piece of code on GitHub.

They didn’t understand all of it the way you would. They didn’t form opinions or have experiences. But they noticed patterns. They noticed that after “Dear” people usually write a name. That after “quarterly revenue increased” people usually write “by” followed by a percentage. That when someone asks “how do I” the next words are usually a task, followed by step-by-step instructions.

This new hire didn’t memorize facts like a textbook. They memorized how language flows. They can finish anyone’s sentence, in any department, on any topic, because they’ve seen millions of similar sentences before.

That’s an LLM. That’s the whole idea.

The World’s Best Sentence Finisher

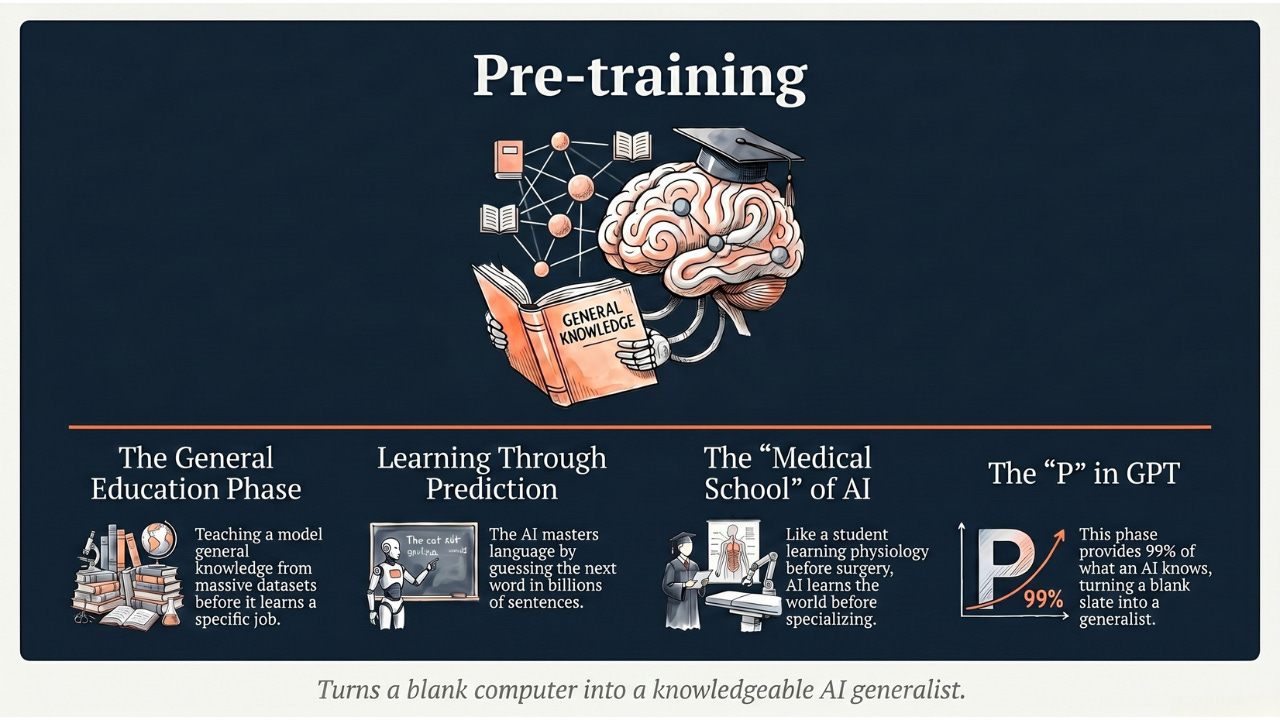

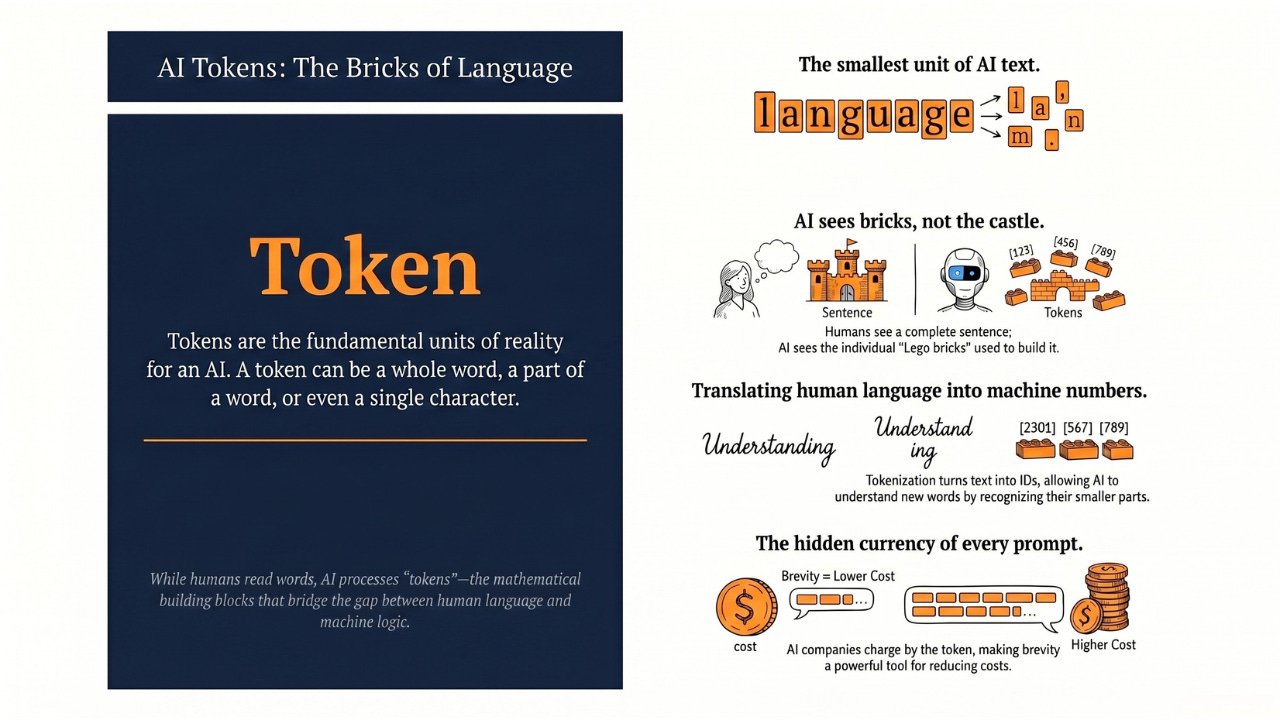

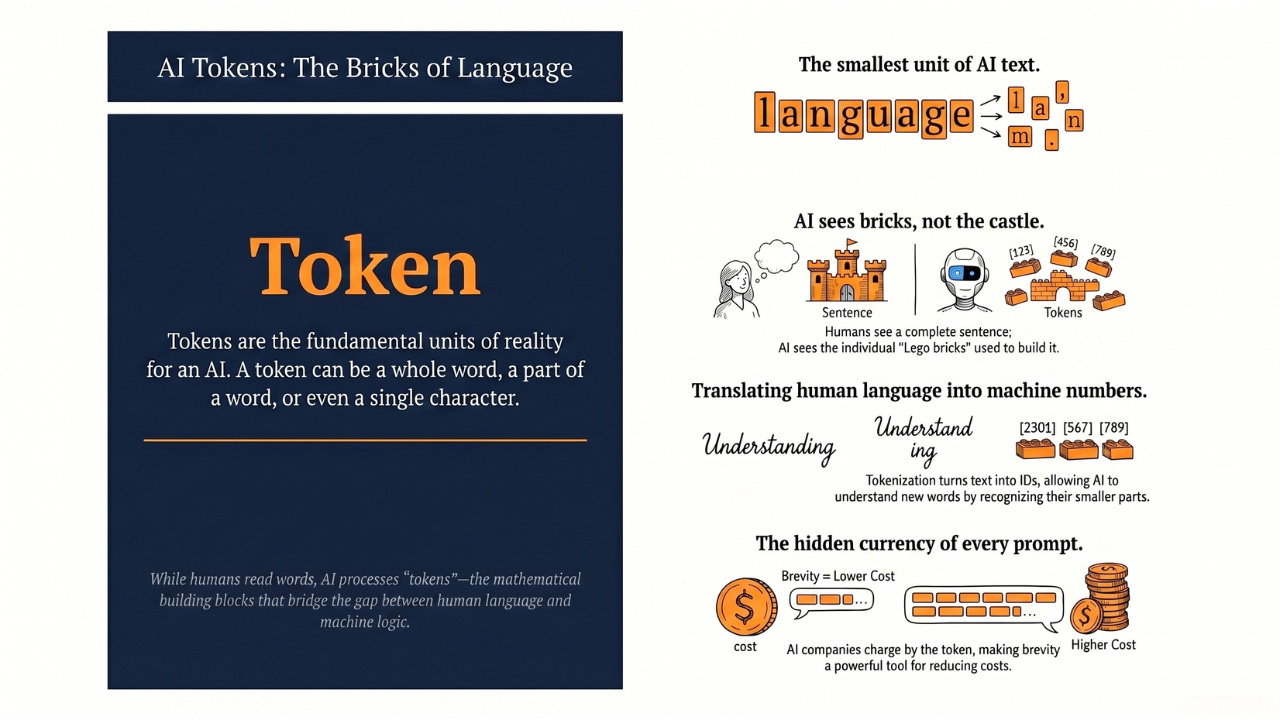

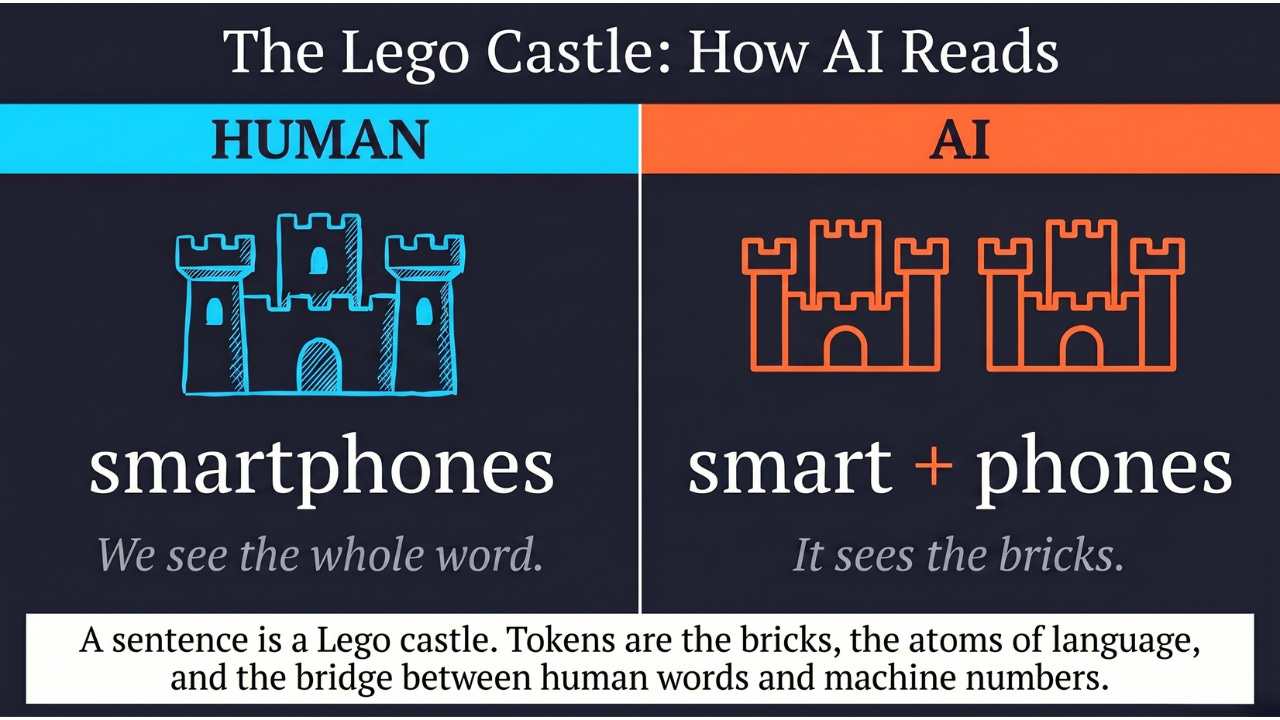

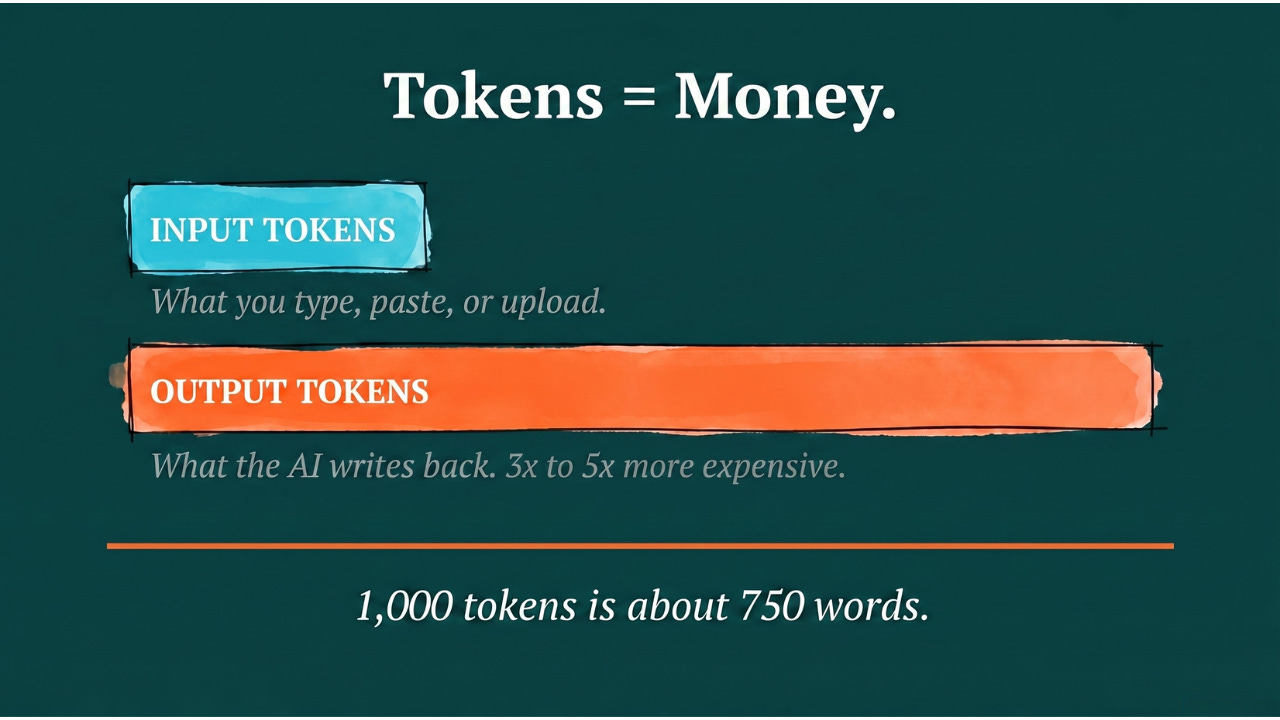

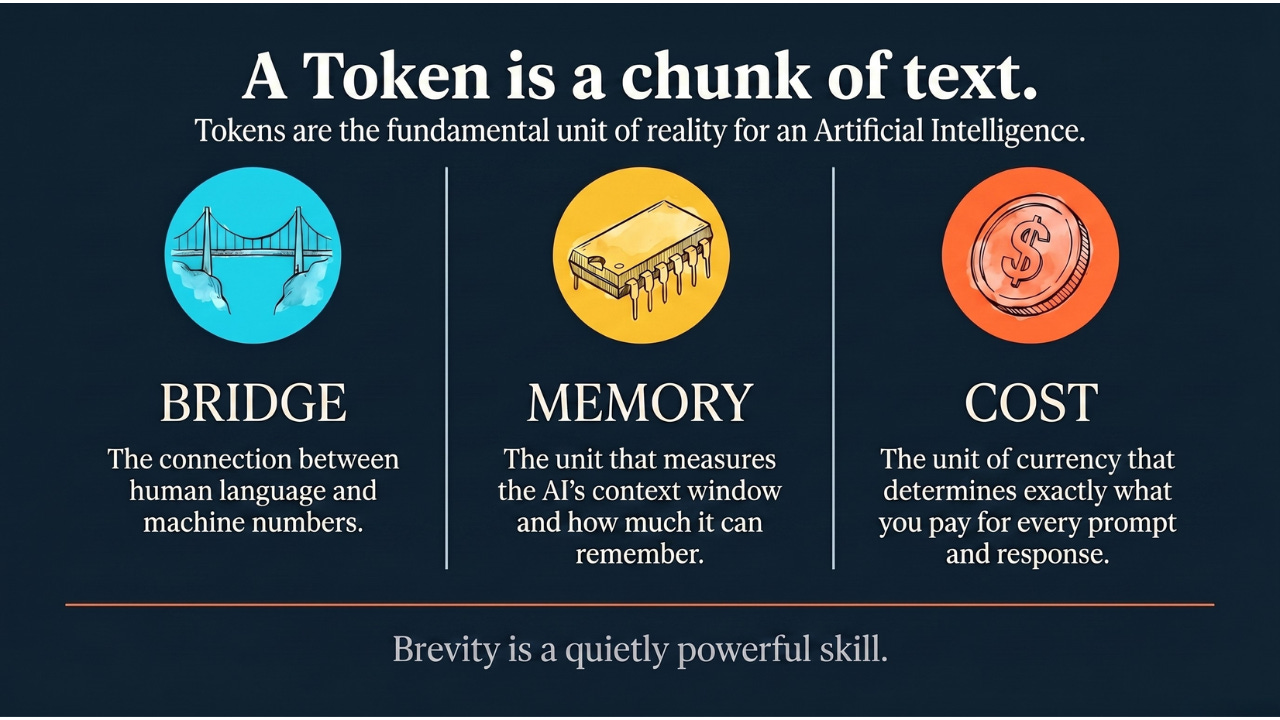

At its core, an LLM does one thing: predict the next word. (Technically, it predicts the next token — a small chunk of text that’s usually a word or part of a word. We’ll cover tokens in a future article. For now, “word” is close enough.)

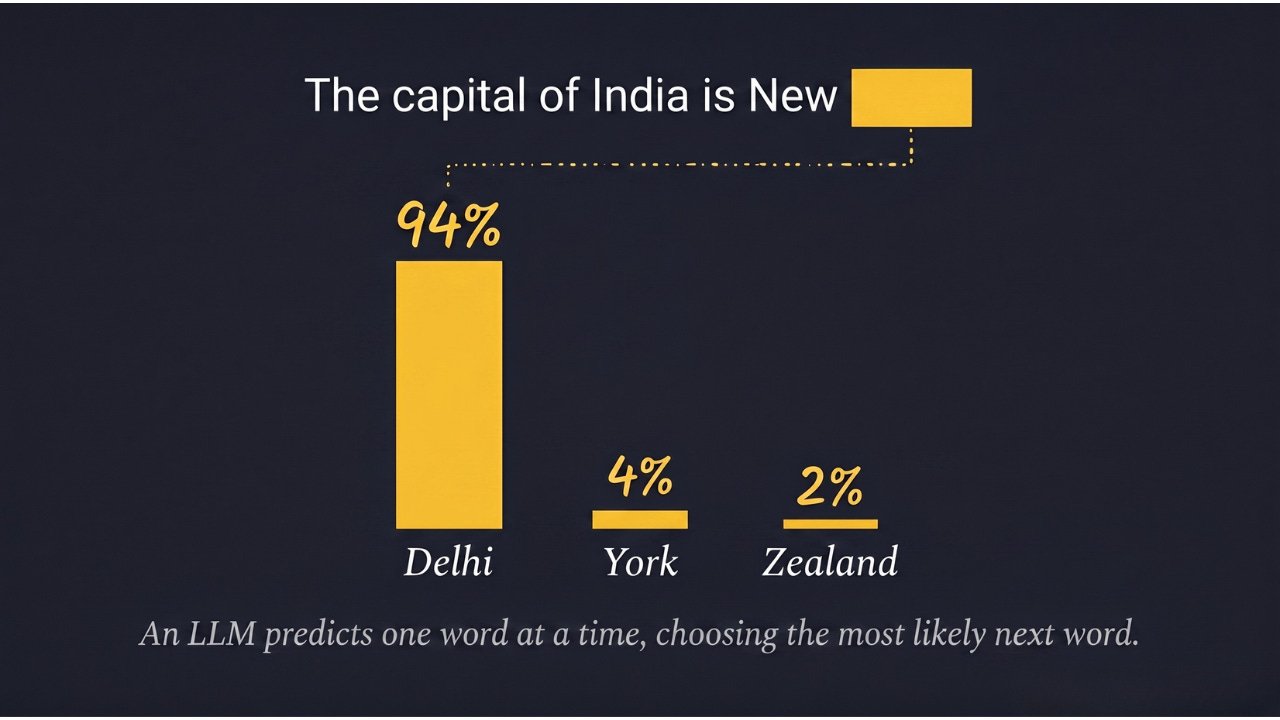

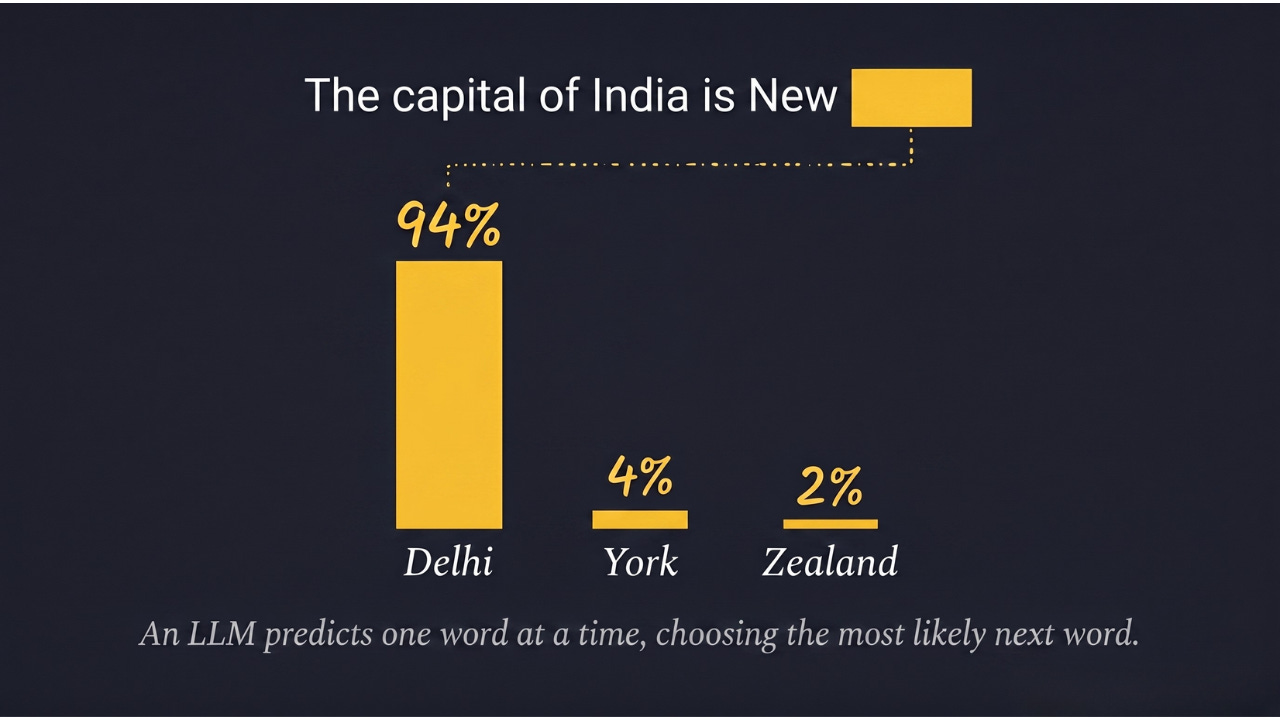

You actually do this too. If I say: “The capital of India is New…”

Your brain instantly completes: “Delhi.”

You didn’t look it up. You’ve seen those words together enough times that the completion is automatic.

Your phone does this too. You type “I am on my…” and your keyboard suggests “way.” Your phone learned this pattern from your text messages.

Now scale that up dramatically.

Your phone looks at the last few words to guess the next one. A modern LLM can look back over hundreds of thousands of words. Your phone learned from your texts. An LLM learned from the entire internet.
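To make the phone-keyboard idea concrete, here is a minimal sketch of a next-word suggester. It assumes the simplest possible setup: it only looks at the single previous word and counts what followed it in a tiny, made-up message history. The function names and the sample messages are hypothetical, invented for this example.

```python
from collections import Counter, defaultdict

def train_next_word_counts(text):
    """Count which word follows each word in the training text."""
    words = text.lower().split()
    counts = defaultdict(Counter)
    for current, following in zip(words, words[1:]):
        counts[current][following] += 1
    return counts

def suggest(counts, word):
    """Return the most frequent follower of `word`, or None if it was never seen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical message history, made up for this example.
messages = "i am on my way . i am on my way home . see you on my birthday"
counts = train_next_word_counts(messages)

print(suggest(counts, "my"))  # way
```

An LLM is doing something in the same spirit, but with far more context and learned parameters instead of a simple count table.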

Back to our new hire analogy: imagine asking them to finish this sentence: “Based on our Q3 projections and the current market conditions, the board recommends that we…”

Because they’ve read millions of similar corporate emails, they know what typically comes next. Not because they understand finance. Because they’ve seen this pattern thousands of times. They’re pattern-matching at a scale no human could match.

That’s how ChatGPT writes entire paragraphs. One word at a time, each chosen because it’s a highly likely continuation of everything before it. Like a new hire who’s so well-read that they can finish any sentence in any department.
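The "one word at a time" loop can be sketched in a few lines. This is a toy, not how any real model is built: the probability table below is invented, it only looks at the previous word (a real LLM looks at everything before it), and it always picks the single most likely option.

```python
# Hypothetical next-word probabilities, invented for this sketch.
NEXT_WORD_PROBS = {
    "the": {"board": 0.6, "market": 0.4},
    "board": {"recommends": 0.9, "meets": 0.1},
    "recommends": {"that": 0.8, "caution": 0.2},
    "that": {"we": 0.7, "the": 0.3},
    "we": {"proceed": 0.5, "wait": 0.3, "invest": 0.2},
}

def generate(start, max_words=10):
    """Build a sentence by repeatedly appending the most likely next word."""
    words = [start]
    for _ in range(max_words):
        options = NEXT_WORD_PROBS.get(words[-1])
        if not options:
            break  # no known continuation, so stop writing
        words.append(max(options, key=options.get))
    return " ".join(words)

print(generate("the"))  # the board recommends that we proceed
```

Real chatbots usually add a bit of randomness when picking among the likely words, which is one reason the same question can get slightly different answers.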

How the New Hire Follows Conversations

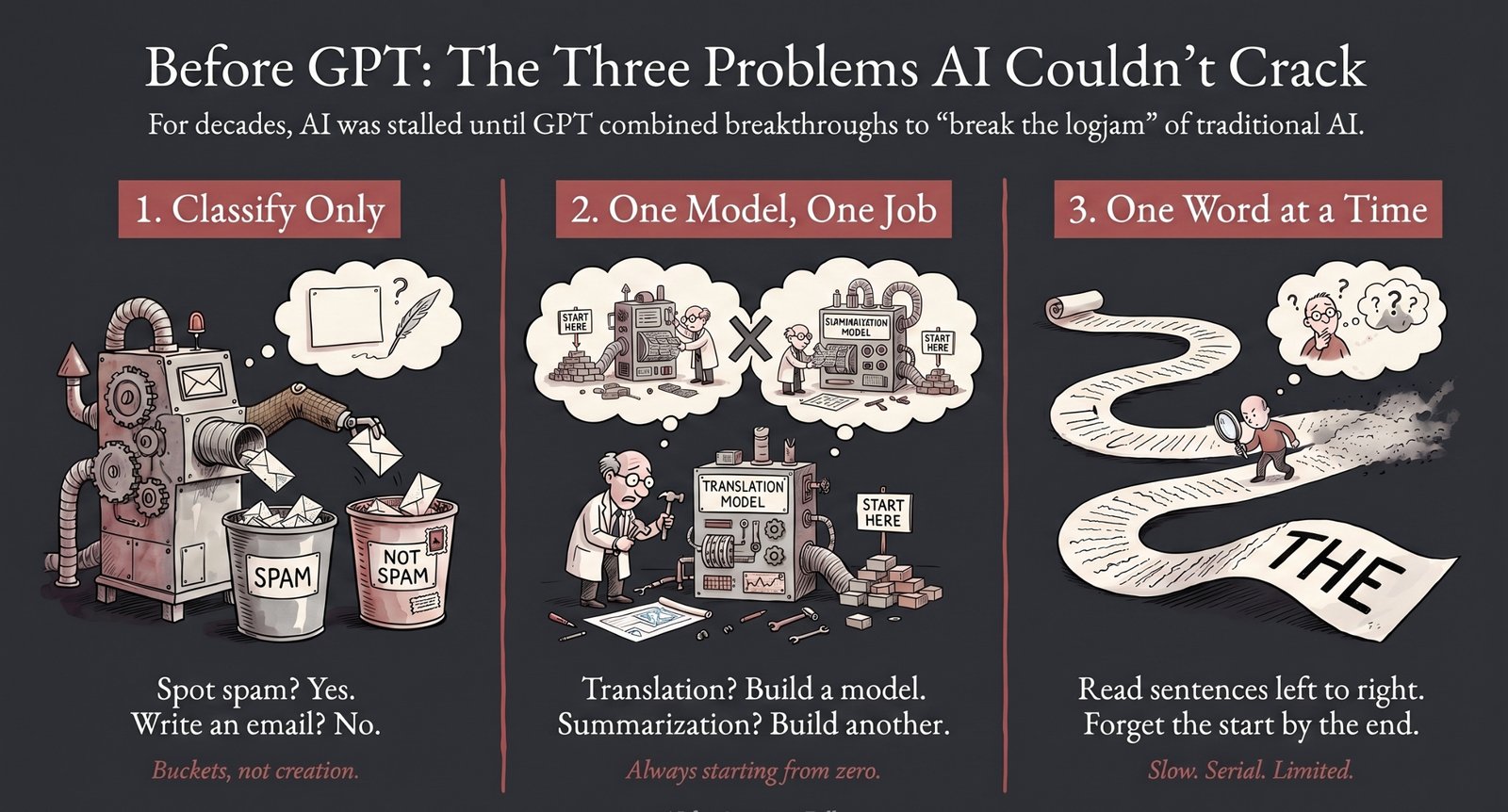

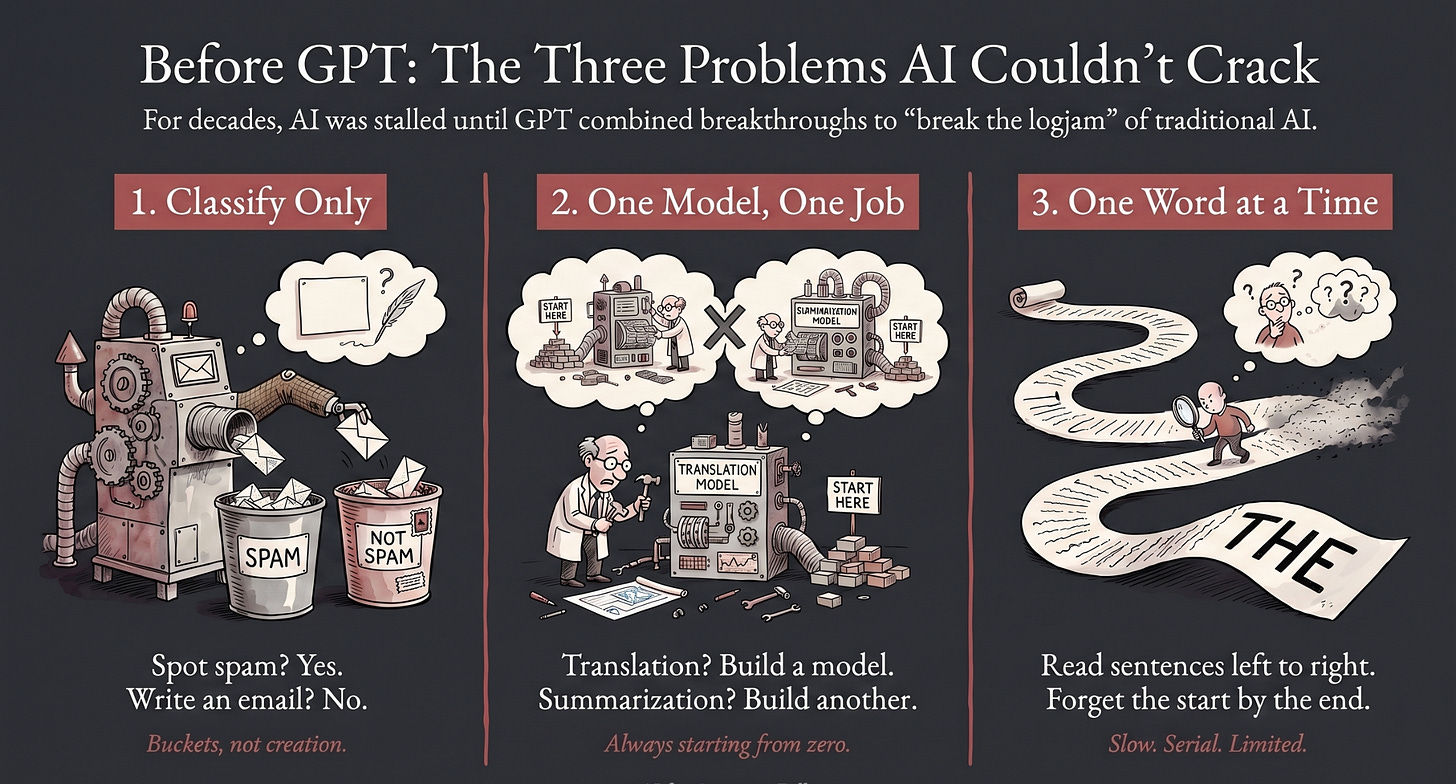

Here’s where it gets interesting. Early AI systems were terrible at long sentences. Tell them a long story and by the end, they’d forgotten the beginning. Like a new hire who nods along in a meeting but can’t connect what was said in minute one to what’s being discussed in minute thirty.

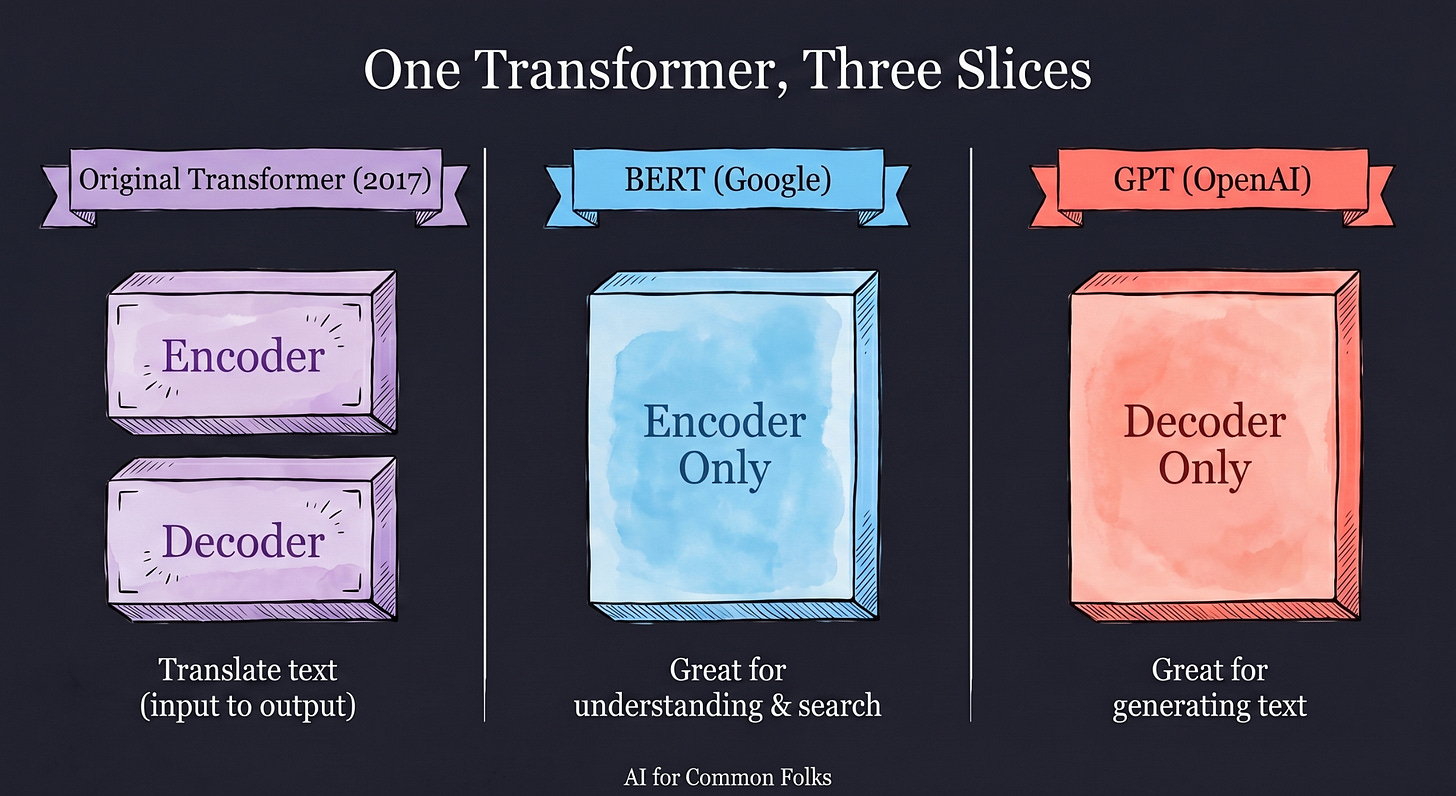

Then in 2017, researchers at Google published a breakthrough called the Transformer. (The “T” in GPT stands for Transformer. That’s how fundamental this is.)

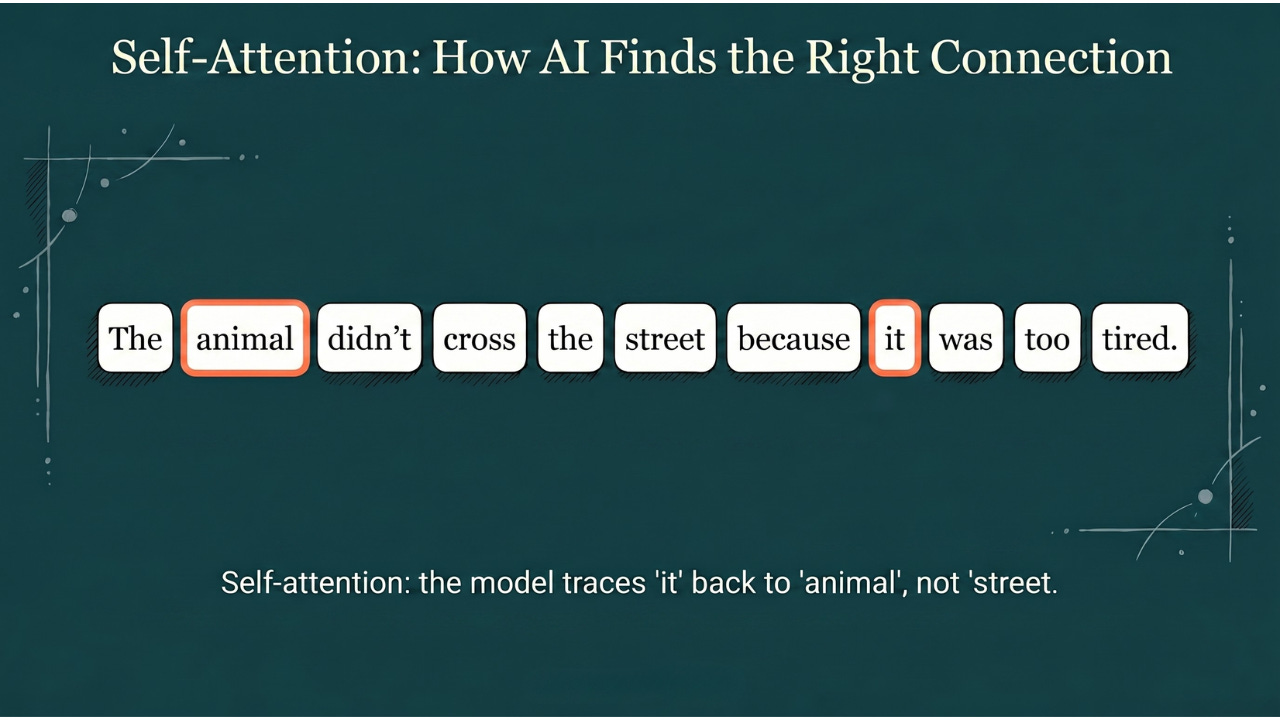

Transformers gave LLMs a superpower called self-attention. Here’s what that means.

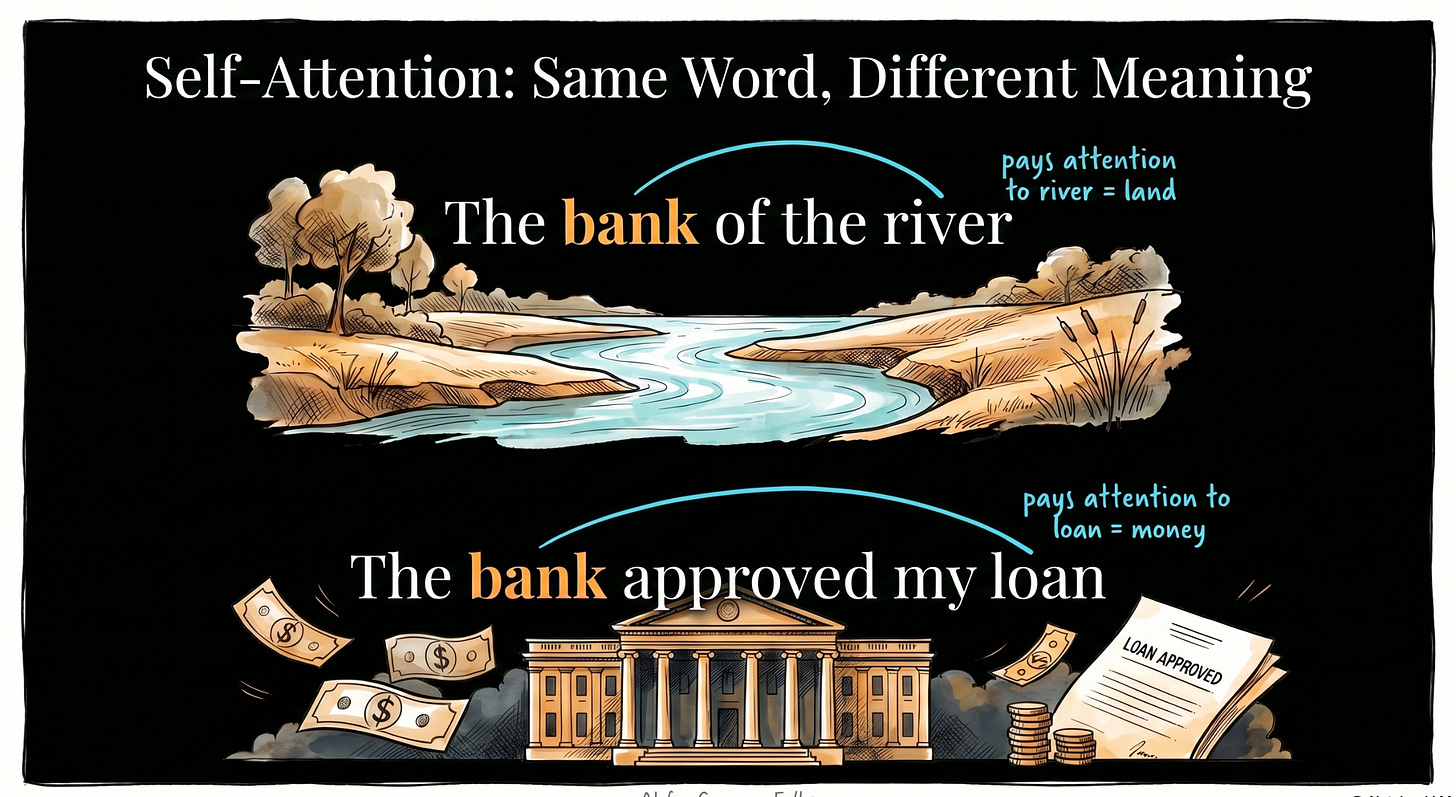

Consider this sentence: “The animal didn’t cross the street because it was too tired.”

What does “it” refer to? The animal or the street?

You know “it” means the animal because the animal is “tired.” Streets don’t get tired.

Before transformers, AI would struggle with this. It read words one by one, left to right, and by the time it got to “it,” the word “animal” was already fading from memory.

Self-attention changed that. Now the LLM looks at all the words in the sentence at once and draws connections between them. When it hits the word “it,” it checks: what does “it” connect to? It sees “tired” and traces back to “animal,” not “street.” In effect, it has captured the relationship between the words.
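Here is a rough sketch of the arithmetic behind that "checking" step. It is heavily simplified: the three-number vectors are hand-picked for illustration (real models learn vectors with thousands of numbers), and it skips the learned query, key, and value transformations that real Transformers use. What it does show is the core idea: score each word against "it", then turn the scores into weights that add up to 1.

```python
import math

# Hypothetical vectors, hand-picked for this example.
# Rough meaning of each position: [living thing, place, can be tired]
VECTORS = {
    "animal": [1.0, 0.0, 1.0],
    "street": [0.0, 1.0, 0.0],
    "tired":  [0.5, 0.0, 1.0],
    "it":     [0.5, 0.5, 1.0],
}

def dot(a, b):
    """Multiply matching positions and add them up: higher means more similar."""
    return sum(x * y for x, y in zip(a, b))

def attention_weights(query_word, context_words):
    """Score each context word against the query word, then softmax the scores."""
    query = VECTORS[query_word]
    scale = math.sqrt(len(query))  # scaling used in scaled dot-product attention
    scores = [dot(query, VECTORS[w]) / scale for w in context_words]
    exps = [math.exp(s) for s in scores]
    total = sum(exps)
    return {w: e / total for w, e in zip(context_words, exps)}

weights = attention_weights("it", ["animal", "street", "tired"])
for word, weight in sorted(weights.items(), key=lambda kv: -kv[1]):
    print(f"{word}: {weight:.2f}")
```

With these made-up vectors, "animal" gets the largest weight and "street" the smallest, which is the toy version of the model connecting "it" to the animal.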

Back to our new hire: imagine they’re reading a 50-page email thread where someone says “she approved the budget.” Self-attention is how the new hire traces “she” back to the CFO mentioned 30 emails ago, not the intern mentioned 2 emails ago. They can follow references across long, messy conversations.

This is what lets LLMs understand context, answer follow-up questions, get jokes, and write code that actually makes sense across hundreds of lines. Before transformers, AI was like a new hire reading one word at a time and forgetting the beginning of the email by the end. After transformers, they can hold the entire conversation in their head at once.

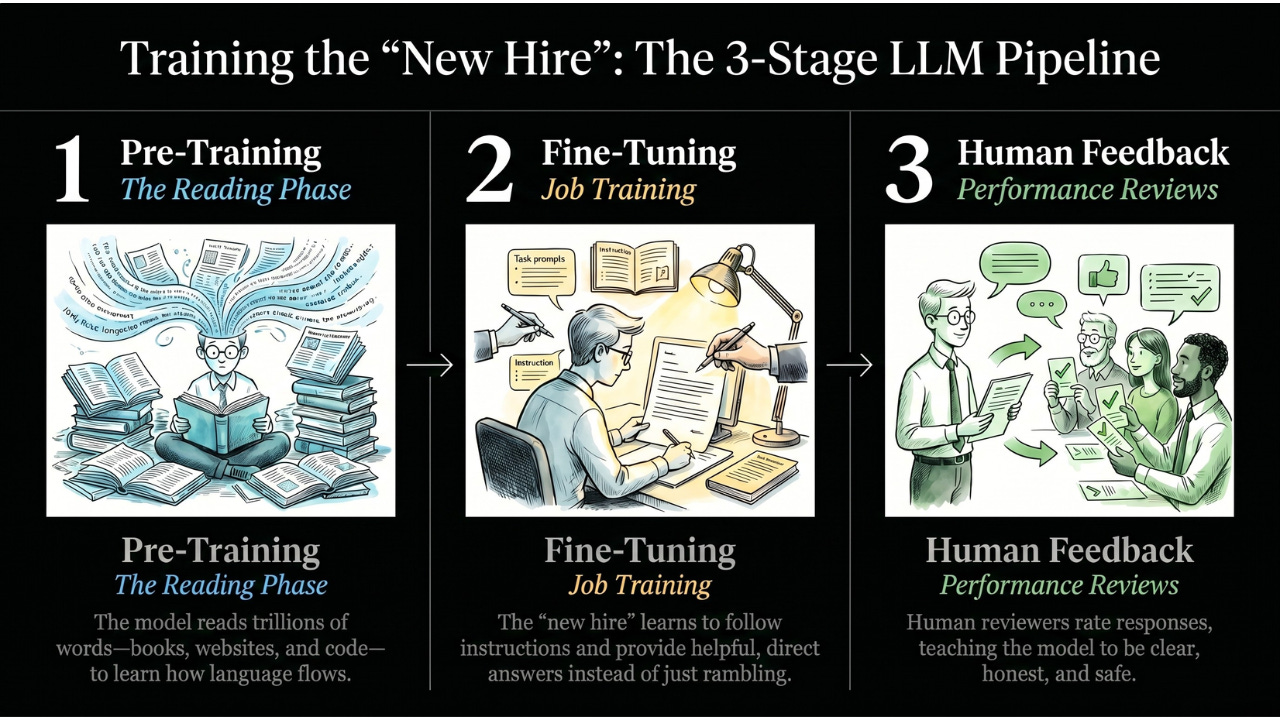

Training the New Hire: Three Stages

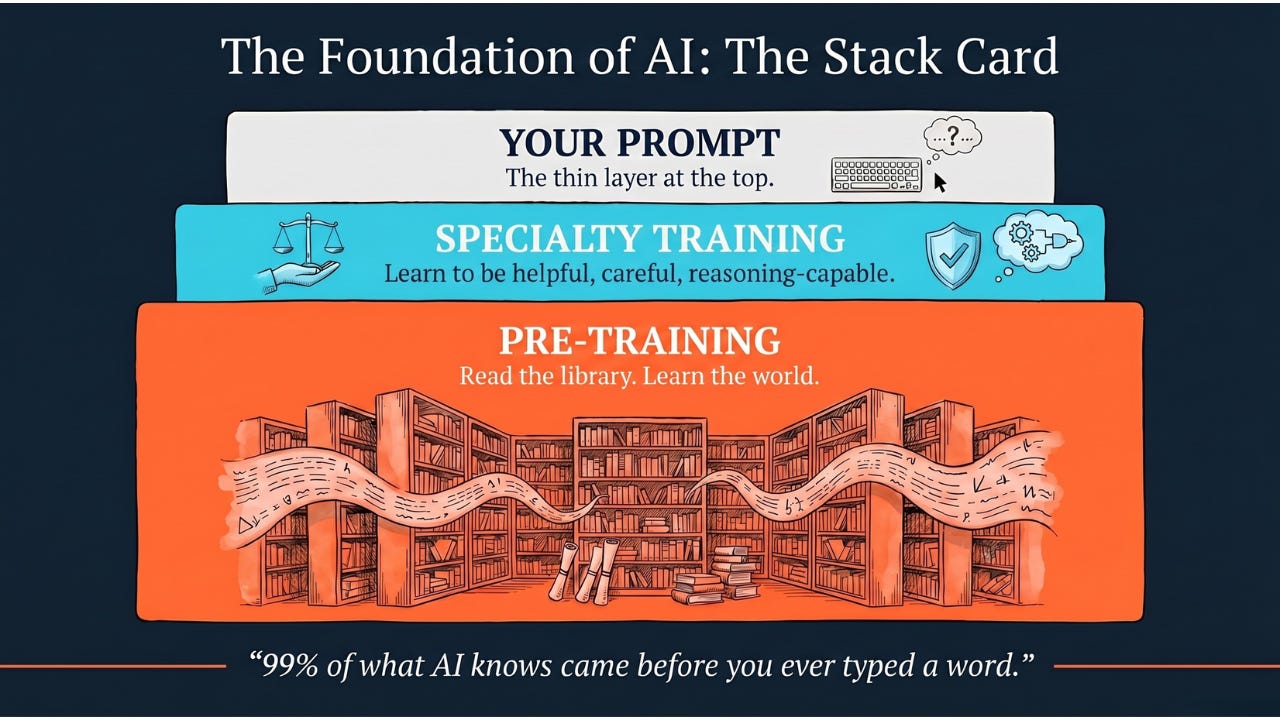

Building an LLM like ChatGPT or Claude isn’t one step. It’s an onboarding process with three stages. Just like any new hire goes through orientation before they’re ready to talk to customers.

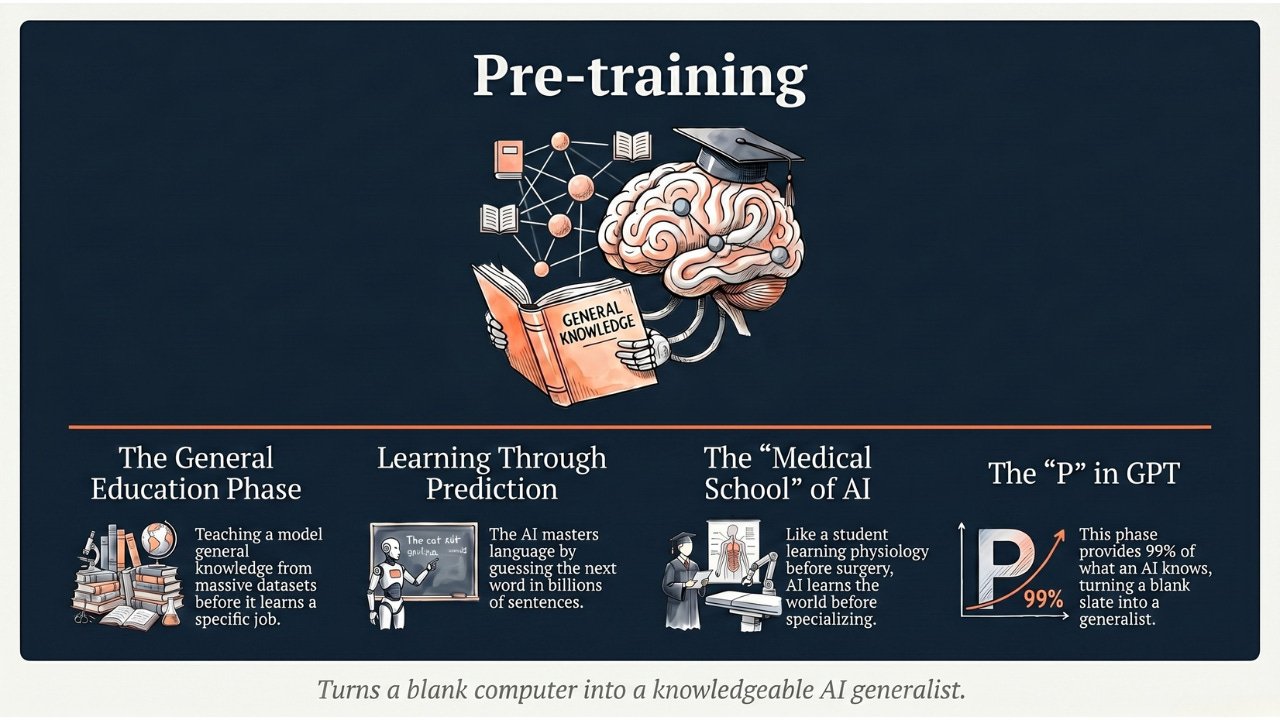

Stage 1: The Reading Phase (Pre-Training)

This is where the new hire reads everything. Terabytes of text. Books, websites, Wikipedia, code, academic papers.

During this phase, the LLM plays a game: we hide the next word in a sentence and ask it to guess. If it guesses wrong, it adjusts its internal settings. (Remember the chai analogy? Same loop. Predict, check the error, adjust, repeat. Millions of times.)

Those “internal settings” are called parameters. Think of them as tiny dials. Modern frontier models (GPT-5, Claude 4, Gemini 2) are believed to have trillions of them, though the exact numbers are kept secret. Each dial is like one of the chai recipe settings from our previous article: a small adjustment that slightly changes the output. Together, trillions of tiny dials produce language that sounds remarkably human.

That’s what “Large” means in Large Language Model. Large = billions to trillions of adjustable dials, trained on a massive amount of text.
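The predict, check, adjust loop can be sketched with just three dials. This is a toy under loud assumptions: one dial per candidate word, a single training sentence ("The capital of India is New ..."), and a made-up learning rate. The update rule shown is ordinary gradient descent on a softmax, which is the general family of technique used in training, not any specific company's recipe.

```python
import math

def softmax(scores):
    """Turn raw dial values into probabilities that add up to 1."""
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def train(candidates, correct_word, steps=20, learning_rate=0.5):
    """Repeat predict -> check -> adjust, and return the final probabilities."""
    dials = [0.0] * len(candidates)      # the "parameters", all neutral at first
    target = candidates.index(correct_word)
    for _ in range(steps):
        probs = softmax(dials)                                   # predict
        errors = [p - (1.0 if i == target else 0.0)              # check
                  for i, p in enumerate(probs)]
        dials = [d - learning_rate * e                           # adjust
                 for d, e in zip(dials, errors)]
    return softmax(dials)

# Hypothetical candidates for the word after "The capital of India is New ..."
candidates = ["Delhi", "York", "Zealand"]
final_probs = train(candidates, "Delhi")

print(candidates[final_probs.index(max(final_probs))])  # Delhi
```

Before training, each candidate has the same one-in-three chance. After a handful of adjustments, the dials lean strongly toward "Delhi". A real model does this across enormous amounts of text with vastly more dials.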

After pre-training, the new hire knows grammar, facts, writing patterns, coding conventions, and the general structure of human communication. But they’re not helpful yet. Ask them a question and they’ll just keep writing, trying to complete the sentence rather than answer you. They’re like a new hire who’s read the entire company wiki but doesn’t know how to have a normal conversation.

Stage 2: Job Training (Fine-Tuning)

Now we teach the new hire how to actually do their job.

We show them thousands of examples of good conversations:

- Customer asks: “How do I reset my password?”

- Good response: “Here are the steps: go to Settings, click Security…”

- Bad response: “…and also how to reset your username and your profile picture and your billing information and…”

The new hire learns the format: when someone asks a question, give a direct, helpful answer. Don’t ramble. Don’t go off on tangents.

This is fine-tuning. Same new hire, same knowledge from the reading phase, but now they know how to channel it into a helpful conversation instead of an endless monologue.
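If you're curious what those example conversations look like as data, here is a small sketch. The field names and the "User:" / "Assistant:" template are hypothetical, chosen only for illustration. Each company uses its own format. The point is that a good conversation gets turned into plain text, and the model keeps practicing next-word prediction on it.

```python
# Hypothetical fine-tuning examples, based on the password example above.
examples = [
    {
        "prompt": "How do I reset my password?",
        "response": "Here are the steps: go to Settings, click Security…",
    },
]

def format_example(example):
    """Turn one question/answer pair into a single piece of training text."""
    return f"User: {example['prompt']}\nAssistant: {example['response']}"

training_text = format_example(examples[0])
print(training_text)
```

Seeing thousands of texts shaped like this is how the model picks up the habit of answering the question instead of continuing it.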

Stage 3: Performance Reviews (Human Feedback)

The new hire is now having real conversations. But sometimes they’re rude. Sometimes they make things up. Sometimes they give dangerous advice.

So we bring in human reviewers. They chat with the LLM and rate the responses. Helpful and accurate? Thumbs up. Rude, wrong, or harmful? Thumbs down.

The model learns: “Humans prefer it when I’m clear, honest, and careful. They don’t like it when I make things up or lecture them.”

Think of it as ongoing performance reviews. The new hire adjusts their behavior based on what gets positive feedback and what gets complaints.
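As a rough sketch, here is what tallying those thumbs up and thumbs down could look like. The ratings and response labels are made up, and this only shows the bookkeeping step. Real systems typically go further and use ratings like these to train the model itself, which this example does not attempt.

```python
from collections import defaultdict

# Hypothetical reviewer ratings: (kind of response, +1 thumbs up or -1 thumbs down).
ratings = [
    ("clear and honest", +1),
    ("clear and honest", +1),
    ("makes things up", -1),
    ("lectures the user", -1),
    ("clear and honest", -1),
    ("makes things up", -1),
]

def average_scores(ratings):
    """Average the votes for each kind of response."""
    votes_by_style = defaultdict(list)
    for style, vote in ratings:
        votes_by_style[style].append(vote)
    return {style: sum(votes) / len(votes)
            for style, votes in votes_by_style.items()}

scores = average_scores(ratings)
best = max(scores, key=scores.get)
print(best)  # clear and honest
```

The kind of response with the best average score is the behavior the new hire gets nudged toward.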

This last stage is why ChatGPT and Claude feel different from each other even though they’re both LLMs. Different companies hire different reviewers with different values. Same new hire, different management styles, different workplace cultures.

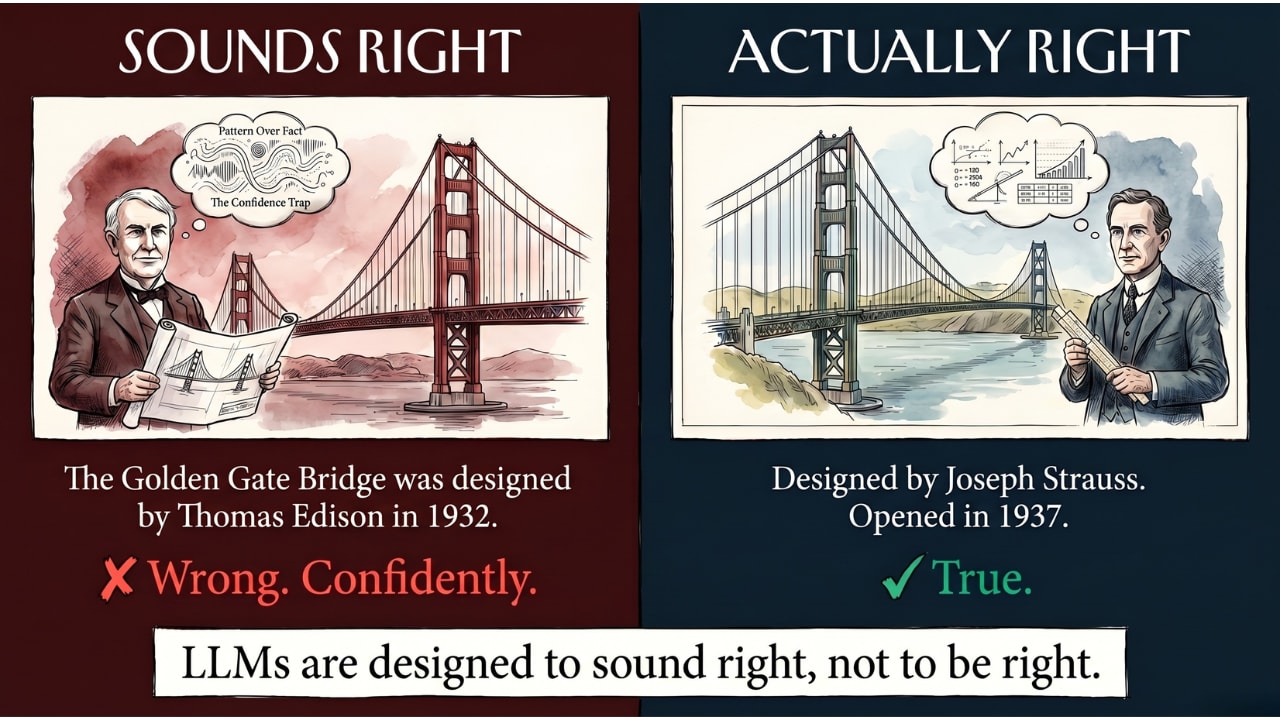

When the New Hire Makes Things Up

Here’s the catch with our new hire. They’re so well-read and so good at sounding confident that sometimes they make things up. And they deliver the fiction with the exact same confidence as the facts.

This is called hallucination.

Remember: the LLM predicts the next most likely word. It doesn’t have a database of facts. It doesn’t “look things up.” It generates text that sounds right based on patterns.

Imagine asking the new hire: “Who designed the Golden Gate Bridge?”

They’ve read enough about bridges and famous people that they might say: “The Golden Gate Bridge was designed by Thomas Edison in 1932.” That sentence is completely wrong. But it sounds like a fact. It has the right structure, the right confidence, the right rhythm of a true statement.

The new hire isn’t lying on purpose. They’re doing what they always do: predicting what the most likely next words would be. And sometimes the most likely-sounding answer isn’t the true answer.

This is the single most important thing to understand about LLMs: they are designed to sound right. Not to be right.

Often they are right, because patterns in language usually reflect reality. But not always. And they won’t reliably pause and say “Actually, I’m not sure about this.” More often, they’ll just keep predicting the next most confident-sounding word.

Where You Already Use LLMs

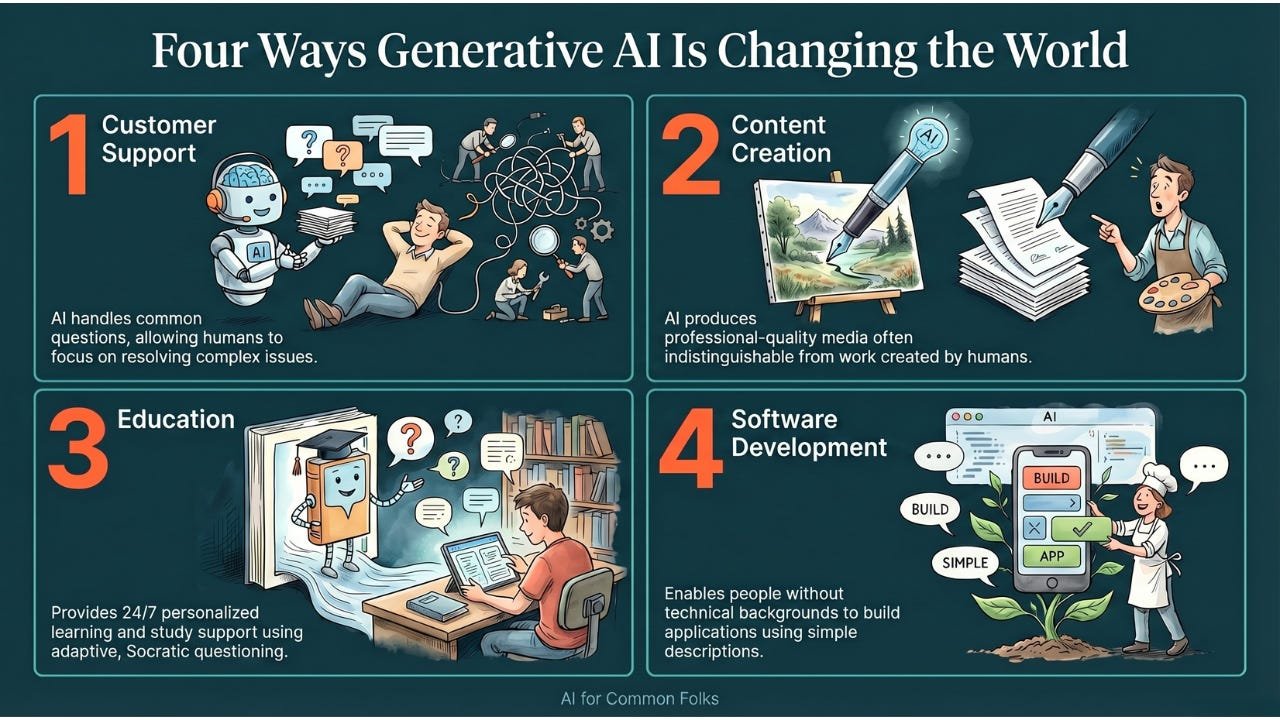

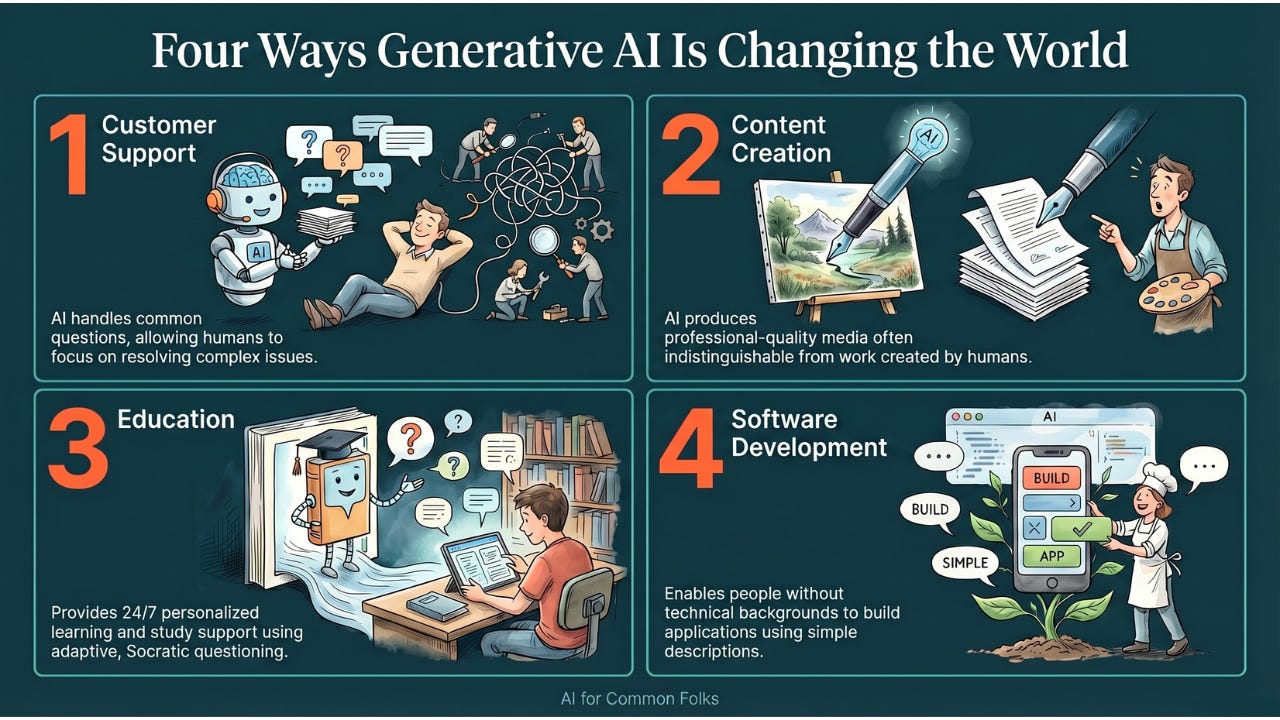

You interact with this new hire more than you realize. They’ve been placed in departments all across your digital life:

-

ChatGPT, Claude, Gemini: The obvious ones. Every conversation is an LLM predicting one word at a time.

-

Email: Gmail’s “Help me write” and Outlook’s Copilot. The new hire is drafting your emails.

-

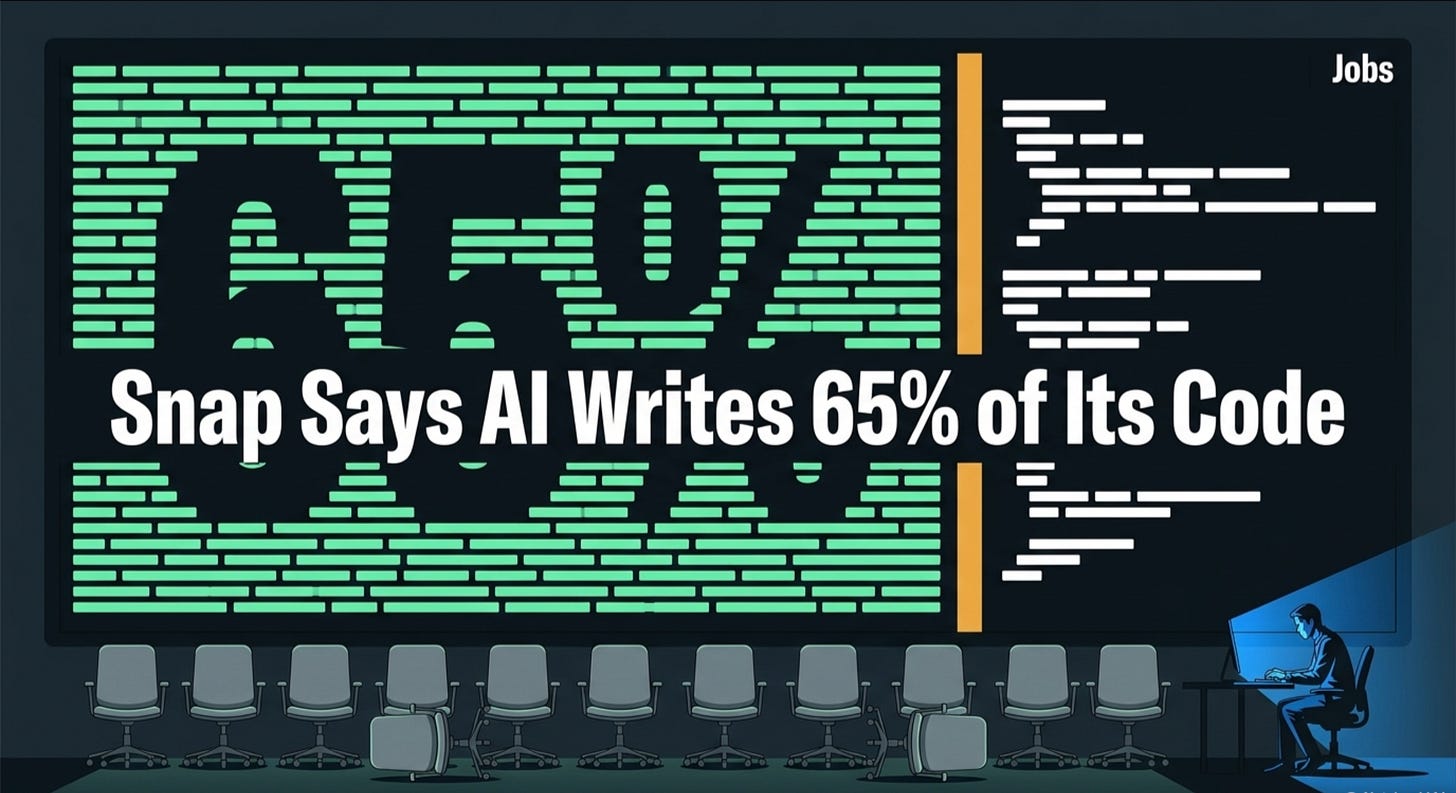

Code: GitHub Copilot suggests code as developers type. The new hire sits next to every programmer.

-

Search: Google and Bing now use LLMs to summarize search results instead of just showing links. The new hire reads all the results and writes you a summary.

-

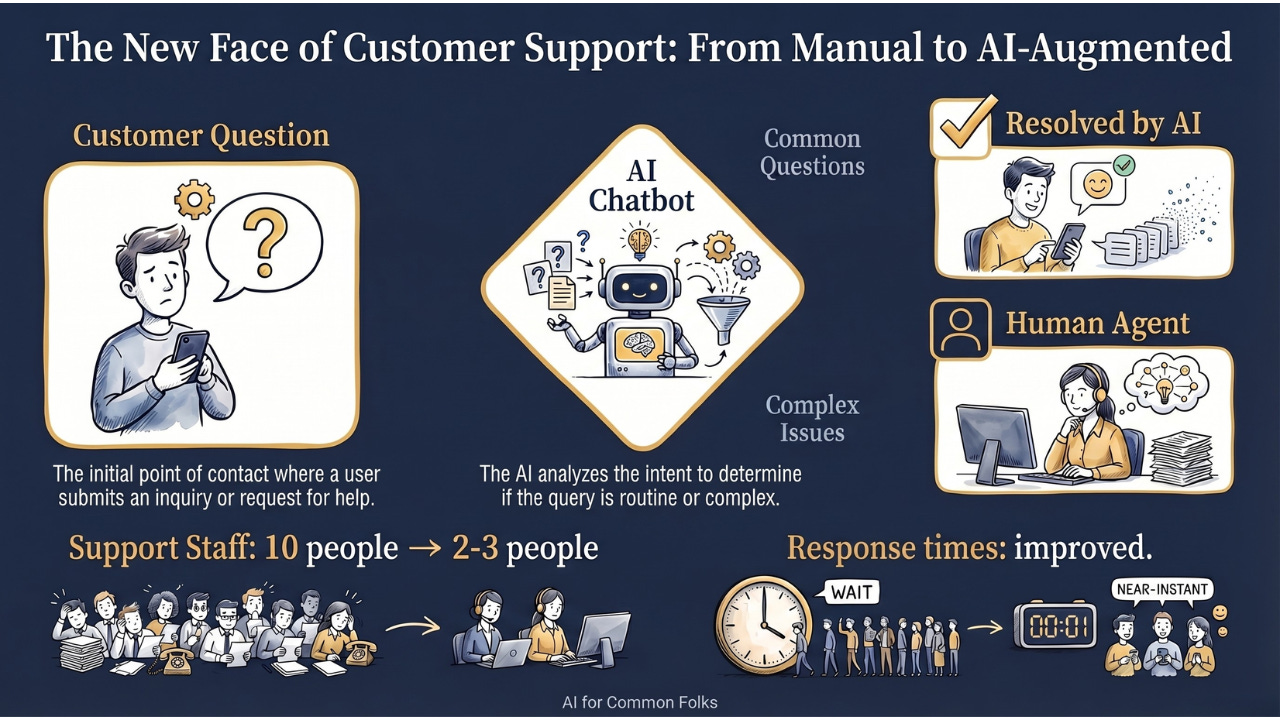

Customer service: Many companies have replaced scripted chatbots with LLM-powered support. The new hire handles your complaints now.

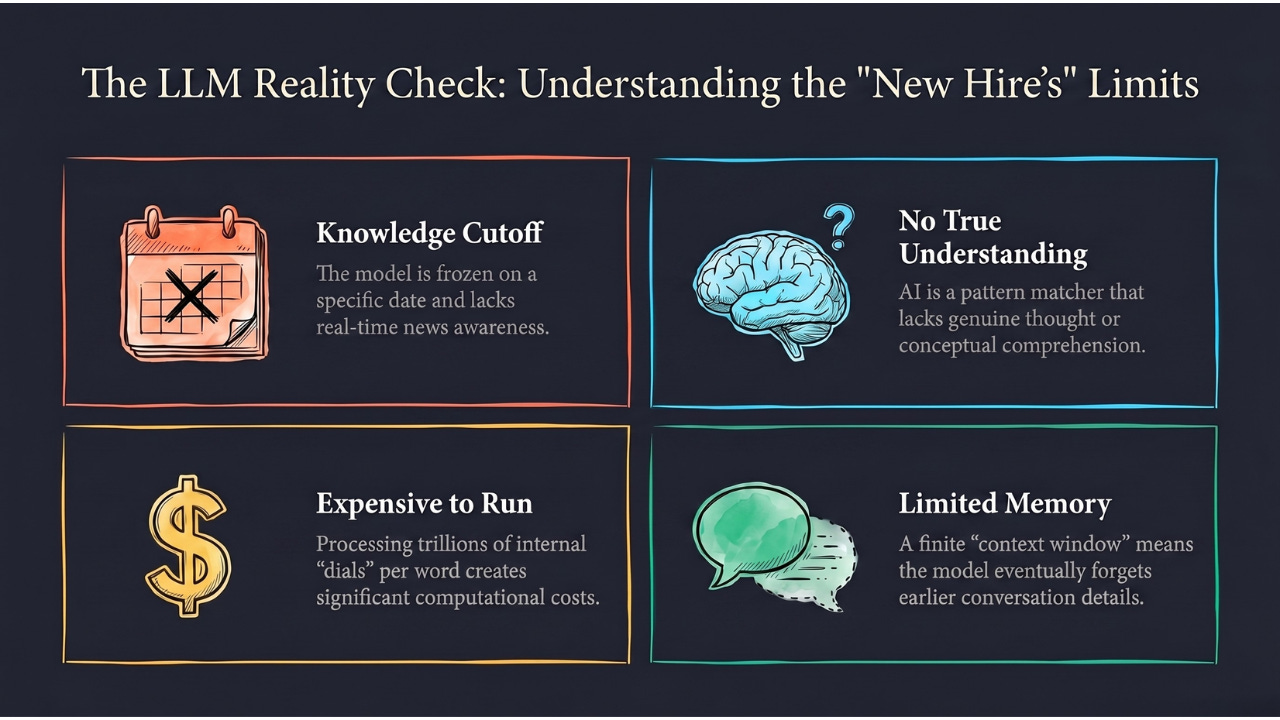

The New Hire’s Limitations (Keeping It Real)

Our new hire is impressive. But they have real weaknesses you should know about:

They stopped reading on a specific date. Every LLM has a knowledge cutoff. Ask about yesterday’s news and they genuinely don’t know. It’s like the new hire read everything up to their start date but hasn’t checked the news since. (Some systems work around this by connecting to the internet, but the core model itself is frozen in time.)

They don’t truly understand. They’re the world’s best pattern matcher, not a thinker. They can sound confident while being completely wrong. They don’t “know” anything the way you know your own name. They know what words usually follow other words. That’s it.

They’re expensive to keep around. Every response costs computing power. That’s why advanced AI access isn’t free. Running a trillion dials for every single word in every single response adds up fast.

Their memory has limits. They can only hold so much of the conversation at once. This is called the context window. It’s like the new hire can remember the last hour of conversation clearly but starts forgetting what was said this morning. Long conversations can feel like the AI forgot what you told them earlier, because in a real sense, it did.
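The context window limit can be sketched like this. It is a simplification with stated assumptions: it counts words instead of tokens, the limit of 18 is made up, and it uses the bluntest strategy (drop the oldest messages first). Real chat apps handle this in different ways.

```python
def fit_to_context_window(messages, max_words):
    """Keep the most recent messages whose combined word count fits the limit."""
    kept = []
    used = 0
    for message in reversed(messages):       # start from the newest message
        size = len(message.split())
        if used + size > max_words:
            break                            # everything older is forgotten
        kept.append(message)
        used += size
    return list(reversed(kept))              # restore original order

# Hypothetical conversation, invented for this example.
conversation = [
    "My name is Priya and I work in finance",
    "Can you explain what a token is",
    "Thanks, now explain context windows",
    "What was my name again",
]

visible = fit_to_context_window(conversation, max_words=18)
print(visible)
```

In this toy run, the first message no longer fits, so the model never sees the name it is being asked about. That is the "it forgot what I told it" feeling in miniature.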

The Takeaway

A Large Language Model is the engine powering the AI revolution you’re living through right now.

It’s a new hire who read the entire internet before day one. They predict the next word, one word at a time, with a confidence that makes it look like understanding. They went through reading (pre-training), job training (fine-tuning), and performance reviews (human feedback) to become the helpful assistant you chat with today.

They’re extraordinary at sounding human. They’re terrible at knowing when they’re wrong. And they’re sitting in more of your apps than you probably realized.

Under the hood, it’s the same loop you learned about in our How AI Actually Learns article. Predict, check, adjust, repeat. Just with trillions of dials instead of four chai settings.

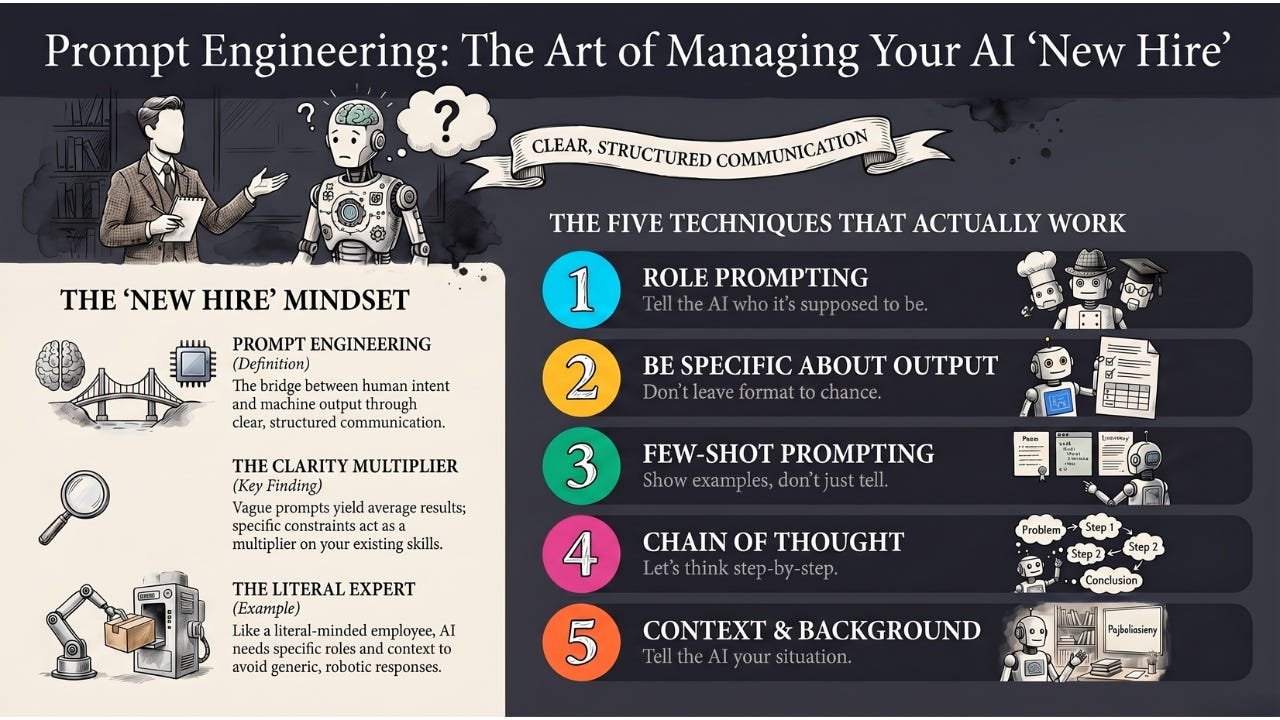

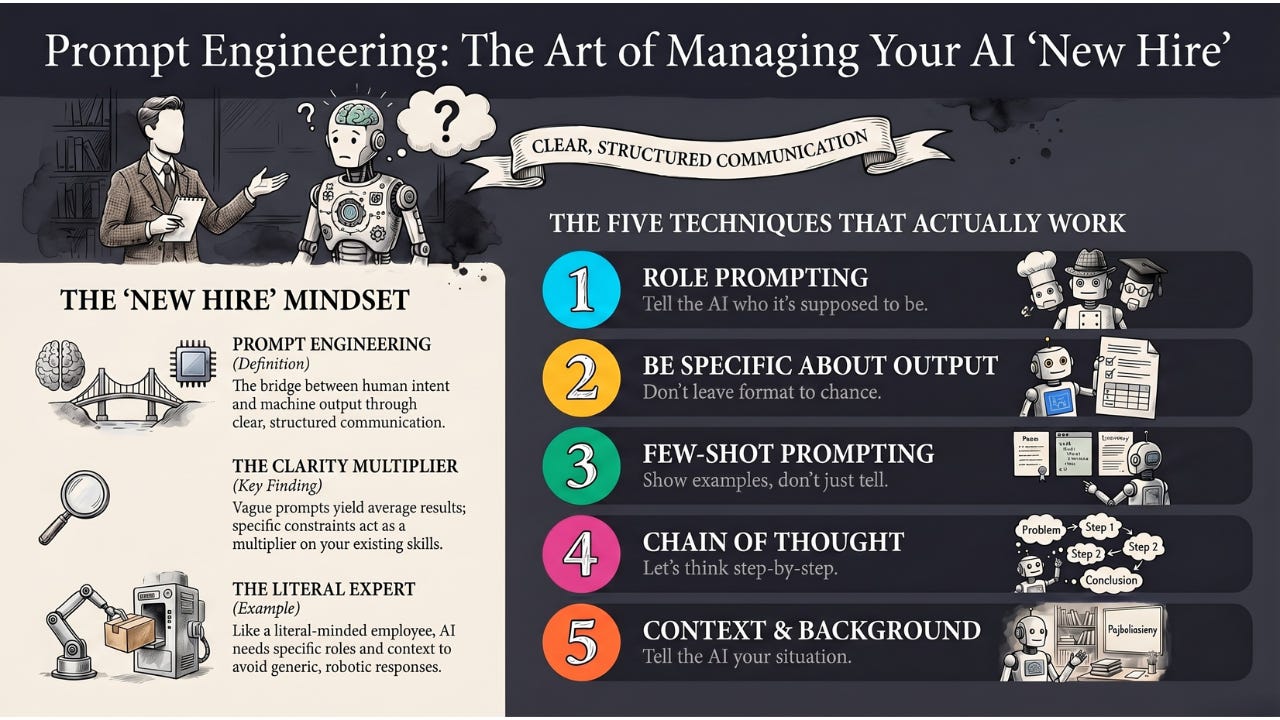

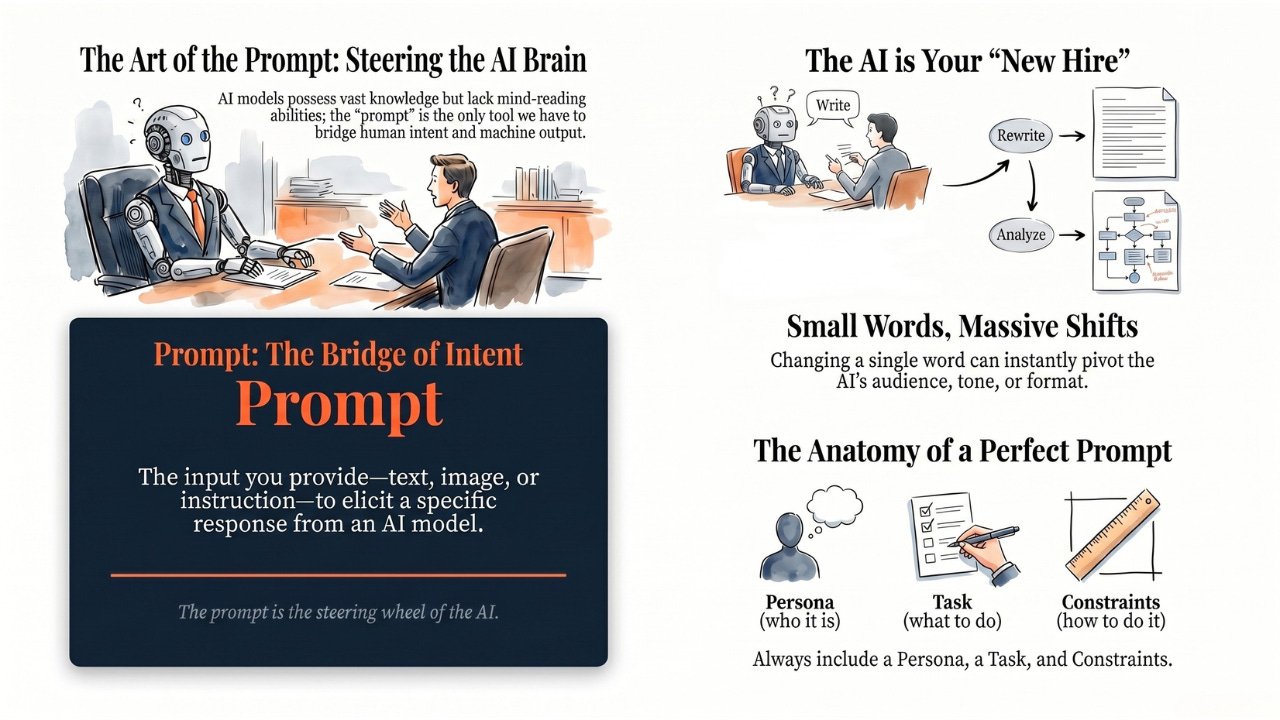

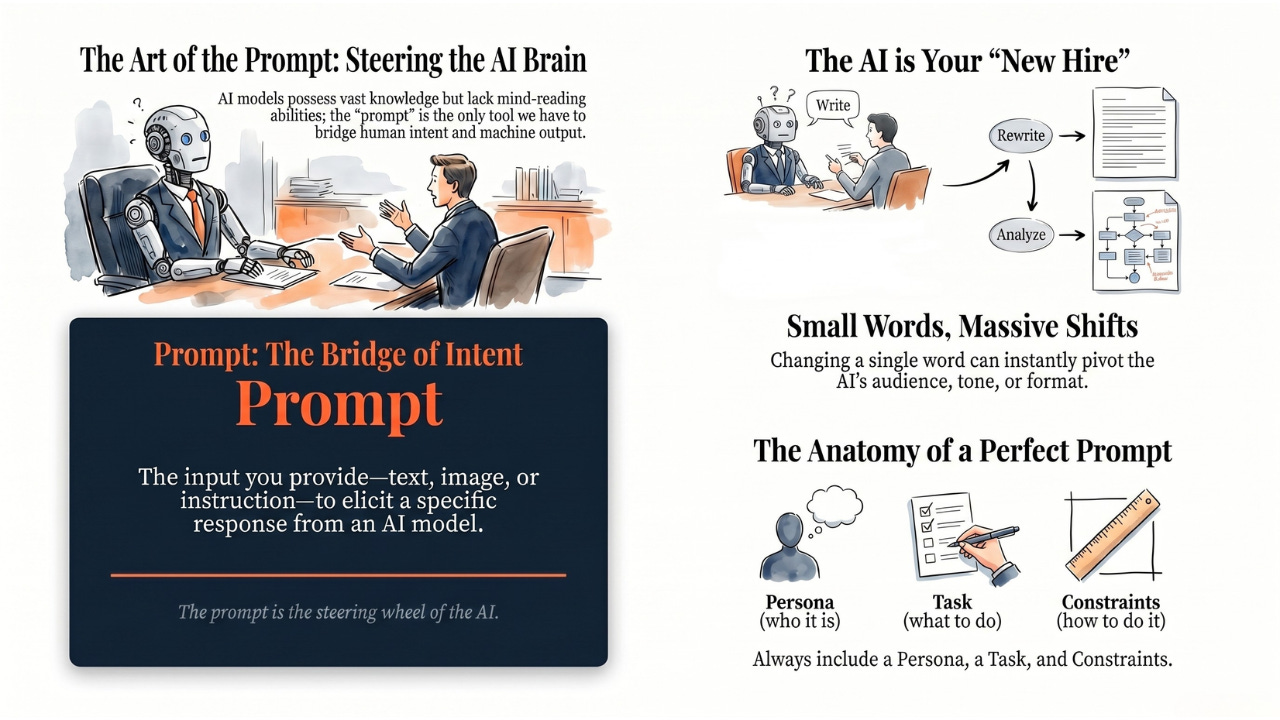

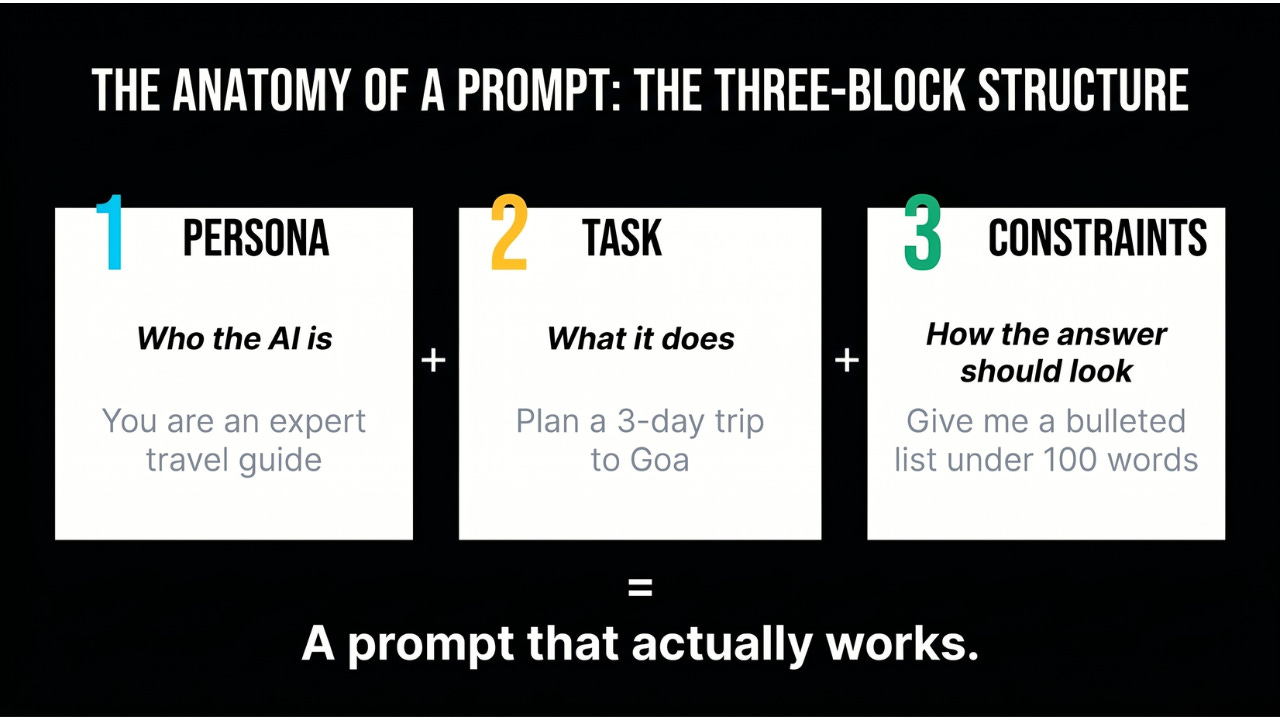

Coming Up

Now you know what the engine is. But here’s a subtle truth: the same LLM can give you a brilliant answer or a useless one depending entirely on how you ask. That little box where you type your question? It has a name — the prompt — and the words you put in it are the steering wheel of the entire engine. Next, we’ll break down what a prompt actually is and why it matters more than most people realize.

AI for Common Folks – Making AI understandable, one concept at a time.
